Voice is becoming the most natural interface between humans and machines. Speech-enabled AI is reshaping customer support, in-car systems, healthcare transcription, and more. One challenge, however, limits its global reach: linguistic diversity. With more than 7,000 languages spoken worldwide and over 80% of the global population communicating primarily in languages other than English, enterprises need voice AI that works across languages.

The global speech and voice recognition market is projected to grow at a compound annual growth rate (CAGR) of more than 20% through the end of the decade. To capitalize on this growth, organizations must invest in multilingual audio annotation.


The Global Demand for Multilingual Voice AI

Regions such as India, Southeast Asia, Latin America, and Africa are seeing rapid growth in voice-first digital services built around regional languages. Enterprises deploying multilingual voice solutions report higher engagement, improved accessibility, and stronger customer trust. Achieving this performance is primarily a data challenge rather than a model limitation.

Why Multilingual Audio Annotation Is Critical

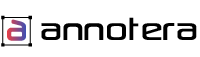

Multilingual audio annotation goes beyond verbatim transcription. It includes language and dialect identification, speaker diarization and role labeling, time-stamped transcriptions, code-switching detection, and noise, emotion, and intent tagging.
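To make these layers concrete, here is a minimal sketch of what a single annotated segment might carry. The schema is hypothetical: the `Segment` class, its field names, and the `validate` helper are illustrative assumptions, not a standard format or Annotera's internal representation. The validator flags overlaps only when they come from the same speaker. Overlapping speech between different speakers (crosstalk) is legitimate and is left alone.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class Segment:
    """One time-stamped stretch of speech (hypothetical schema, not a standard format)."""
    start_s: float                    # segment start, in seconds from the start of the audio
    end_s: float                      # segment end, in seconds
    speaker: str                      # diarization label, e.g. "SPK_1"
    role: str                         # speaker role, e.g. "agent" or "customer"
    language: str                     # language tag, e.g. "hi" or "en"
    transcript: str                   # verbatim transcription of this segment
    dialect: Optional[str] = None     # dialect label, if the project tracks one
    code_switched: bool = False       # True if the segment mixes languages
    tags: Dict[str, str] = field(default_factory=dict)  # e.g. {"noise": "traffic", "intent": "refund"}

    def duration(self) -> float:
        return self.end_s - self.start_s


def validate(segments: List[Segment]) -> List[str]:
    """Flag empty or negative time ranges and overlapping segments from the same speaker."""
    problems = []
    for i, seg in enumerate(segments):
        if seg.end_s <= seg.start_s:
            problems.append(f"segment {i}: end_s must be after start_s")
    # Group by speaker so that crosstalk between different speakers is not flagged.
    by_speaker: Dict[str, List[Segment]] = {}
    for seg in segments:
        by_speaker.setdefault(seg.speaker, []).append(seg)
    for speaker, segs in by_speaker.items():
        segs.sort(key=lambda s: s.start_s)
        for prev, nxt in zip(segs, segs[1:]):
            if nxt.start_s < prev.end_s:
                problems.append(f"speaker {speaker}: overlap at {nxt.start_s}s")
    return problems
```

Automated checks like this catch only structural errors. Transcription accuracy and label correctness still depend on human review.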

Models trained on diverse, well-annotated multilingual datasets achieve lower word error rates and greater robustness across accents and low-resource languages. This is why many enterprises rely on specialized audio annotation providers rather than building annotation pipelines in-house.
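Word error rate (WER), the metric mentioned above, is conventionally defined as (S + D + I) / N. Here S, D, and I are the word substitutions, deletions, and insertions needed to turn the model's output into the reference transcript, and N is the number of reference words. The minimal sketch below computes it with a word-level edit distance. One assumption matters here: the code splits words on whitespace. That does not suit languages written without spaces between words, such as Thai, Chinese, or Japanese, where character error rate or a language-specific tokenizer is more common.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / number of reference words."""
    ref = reference.split()   # assumes whitespace-delimited words
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev[j] holds the edit distance between the reference words seen so far
    # and the first j hypothesis words (one row of the Levenshtein table).
    prev = list(range(len(hyp) + 1))
    for i in range(1, len(ref) + 1):
        curr = [i] + [0] * len(hyp)
        for j in range(1, len(hyp) + 1):
            cost = 0 if ref[i - 1] == hyp[j - 1] else 1
            curr[j] = min(
                prev[j] + 1,         # deletion of a reference word
                curr[j - 1] + 1,     # insertion of an extra hypothesis word
                prev[j - 1] + cost,  # substitution (or match when cost is 0)
            )
        prev = curr
    return prev[len(hyp)] / len(ref)
```

For example, `word_error_rate("mera order kab aayega", "mera order kab ayega")` returns `0.25`, because one of four reference words is substituted.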

Key Challenges in Multilingual Audio

Dialect and Accent Variation

A single language can have dozens of regional dialects with distinct pronunciation, vocabulary, and grammar. Annotation teams need native-level fluency in specific dialects, not just the standard form.

Code-Switching

Bilingual speakers frequently switch between languages mid-sentence. Annotators must tag language boundaries accurately so models learn to handle mixed-language input.
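One way to represent language boundaries is to have annotators tag each word with a language, then merge runs of same-language words into spans. The sketch below does this with `itertools.groupby`. It assumes word-level timestamps and per-word language tags (here ISO 639-1 codes such as `hi` and `en`) are already available. `Token`, `Span`, `language_spans`, and `switch_points` are hypothetical names for illustration.

```python
from itertools import groupby
from typing import List, NamedTuple


class Token(NamedTuple):
    word: str
    lang: str        # per-word language tag assigned by the annotator, e.g. "hi" or "en"
    start_s: float   # word start time, in seconds
    end_s: float     # word end time, in seconds


class Span(NamedTuple):
    lang: str
    start_s: float
    end_s: float
    text: str


def language_spans(tokens: List[Token]) -> List[Span]:
    """Merge runs of consecutive same-language tokens into single spans."""
    spans = []
    for lang, run in groupby(tokens, key=lambda t: t.lang):
        run = list(run)
        spans.append(Span(lang, run[0].start_s, run[-1].end_s, " ".join(t.word for t in run)))
    return spans


def switch_points(tokens: List[Token]) -> List[float]:
    """Times (in seconds) at which the spoken language changes."""
    return [span.start_s for span in language_spans(tokens)[1:]]
```

For the Hindi-English utterance "mujhe apna refund chahiye" ("I want my refund"), with "refund" tagged `en` and the other words tagged `hi`, `language_spans` yields three spans (`hi`, `en`, `hi`), and `switch_points` returns the two times where the language changes.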

Low-Resource Languages

Many commercially important languages lack large publicly available datasets. Annotation partners must source and train native-speaker annotators for languages with limited existing resources.

Cultural Resonance

Every language has its own rhythm, emotional emphasis, and conversational norms. Literal translations fail to capture how users express urgency, politeness, or frustration. Multilingual audio annotation services enable AI models to learn from native speech patterns and culturally appropriate intonation.

How Annotera Supports Multilingual Audio Annotation

Annotera provides native-speaker annotation teams across 30+ languages. Our multilingual pipelines combine language-specific quality guidelines, dialect-aware annotator assignment, and cross-language consistency checks. We support the full annotation spectrum from transcription and diarization to intent and sentiment tagging.
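As a rough illustration of dialect-aware assignment in general, the sketch below routes each clip to the least-loaded annotator who natively speaks that clip's dialect. Clips with no match are held back rather than sent to a non-native speaker. This is a hypothetical example, not a description of Annotera's actual pipeline. The `Annotator` class, the `assign_by_dialect` function, and the dialect labels (written in the style of BCP 47 tags such as `es-MX`) are all assumptions.

```python
from dataclasses import dataclass
from typing import Dict, List, Set, Tuple


@dataclass
class Annotator:
    name: str
    dialects: Set[str]   # dialects this person speaks natively (hypothetical labels)
    load: int = 0        # number of clips currently assigned


def assign_by_dialect(
    clips: List[Tuple[str, str]], annotators: List[Annotator]
) -> Tuple[Dict[str, str], List[str]]:
    """Route each (clip_id, dialect) pair to the least-loaded native speaker of that dialect.

    Clips with no matching annotator are returned separately so they can be handled
    some other way (for example, by sourcing a new native speaker).
    """
    assignments: Dict[str, str] = {}
    unassigned: List[str] = []
    for clip_id, dialect in clips:
        candidates = [a for a in annotators if dialect in a.dialects]
        if not candidates:
            unassigned.append(clip_id)
            continue
        chosen = min(candidates, key=lambda a: a.load)  # ties go to the first listed annotator
        chosen.load += 1
        assignments[clip_id] = chosen.name
    return assignments, unassigned
```

Balancing by current load is only one possible policy. A production system might also weigh annotator accuracy history or domain expertise.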

Conclusion

Multilingual audio annotation is the foundation of globally scalable voice AI. By investing in diverse, high-quality annotated datasets, enterprises can build voice systems that serve users across languages, accents, and dialects.

Need multilingual audio annotation for your global voice AI initiative? Contact Annotera to get started.