Why Orientation Is the Hardest Problem in 3D Video Annotation

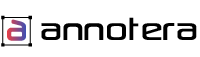

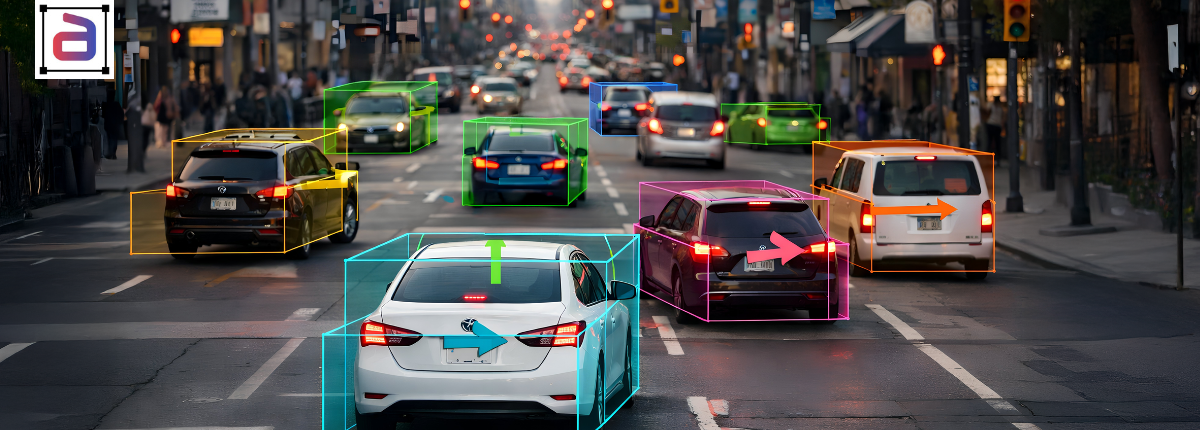

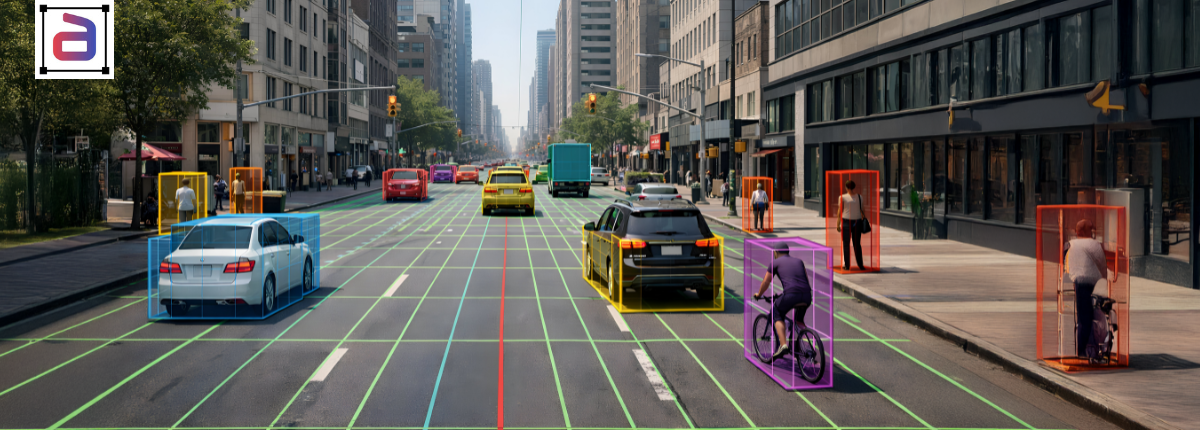

In 3D perception systems, detecting objects is only the first step. Understanding how those objects are oriented in space (how they are rotated, aligned, and positioned relative to the environment) is significantly more complex. For video-based AI systems, this challenge is amplified by motion, occlusion, and changing viewpoints across frames. 3D cuboid video labeling addresses it by annotating each object with a three-dimensional box that is tracked from frame to frame, capturing the orientation, depth, and movement information that advanced video models need.

This is where 3D cuboid video labeling becomes both essential and difficult. Orientation errors in cuboid annotation can lead to inaccurate depth estimation, unstable tracking, and poor downstream performance. For researchers and advanced ML teams, solving the orientation challenge is critical to building reliable 3D perception models.

What Orientation Means in 3D Cuboid Video Labeling

In the context of 3D cuboid video labeling, orientation refers to how an object is rotated and aligned within three-dimensional space over time. This typically includes yaw (the heading, or rotation about the vertical axis), pitch, and roll, as well as alignment with the ground plane or sensor frame.
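To make these terms concrete, the sketch below stores a cuboid as a center, a size, and three angles, then converts the angles into a rotation matrix to obtain the eight corners and the heading vector. It assumes a z-up frame and the common Z-Y-X rotation order (yaw, then pitch, then roll); the `Cuboid` class and its field names are hypothetical, and real datasets differ in their axis and angle conventions.

```python
import math
from dataclasses import dataclass


@dataclass
class Cuboid:
    # Hypothetical label record: center and size in meters, angles in radians.
    x: float
    y: float
    z: float
    length: float
    width: float
    height: float
    yaw: float = 0.0    # rotation about the vertical z axis (heading)
    pitch: float = 0.0  # rotation about the lateral y axis
    roll: float = 0.0   # rotation about the longitudinal x axis


def rotation_matrix(yaw, pitch, roll):
    """R = Rz(yaw) @ Ry(pitch) @ Rx(roll) as nested lists (Z-Y-X order)."""
    cy, sy = math.cos(yaw), math.sin(yaw)
    cp, sp = math.cos(pitch), math.sin(pitch)
    cr, sr = math.cos(roll), math.sin(roll)
    return [
        [cy * cp, cy * sp * sr - sy * cr, cy * sp * cr + sy * sr],
        [sy * cp, sy * sp * sr + cy * cr, sy * sp * cr - cy * sr],
        [-sp, cp * sr, cp * cr],
    ]


def corners(c):
    """Eight world-frame corners: rotate each local offset, then add the center."""
    R = rotation_matrix(c.yaw, c.pitch, c.roll)
    hl, hw, hh = c.length / 2, c.width / 2, c.height / 2
    out = []
    for dx in (hl, -hl):          # along the length axis
        for dy in (hw, -hw):      # along the width axis
            for dz in (hh, -hh):  # along the height axis
                local = (dx, dy, dz)
                world = [sum(R[i][j] * local[j] for j in range(3)) for i in range(3)]
                out.append((c.x + world[0], c.y + world[1], c.z + world[2]))
    return out


def heading(c):
    """Unit vector along the cuboid's length axis (first column of R)."""
    R = rotation_matrix(c.yaw, c.pitch, c.roll)
    return (R[0][0], R[1][0], R[2][0])


car = Cuboid(x=10.0, y=5.0, z=0.8, length=4.5, width=1.8, height=1.5, yaw=math.pi / 2)
print(heading(car))     # length axis now points along +y
print(corners(car)[0])
```

Because every corner is derived from the same rotation, a single wrong angle moves the whole box, which is why orientation errors affect depth, extent, and heading at once.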

Accurate orientation labeling enables models to:

- Understand object pose and heading

- Predict movement direction

- Distinguish between similar objects with different alignments

- Improve interaction and navigation decisions

Without consistent orientation data, 3D cuboids lose much of their semantic and spatial value.

Why Orientation Errors Degrade 3D Video Models

Orientation inaccuracies introduce noise that propagates through perception pipelines. When object angles are labeled inaccurately, models learn incorrect spatial relationships, and prediction accuracy declines. Precise orientation annotation is therefore a prerequisite for reliable object tracking, depth estimation, and overall model performance.

Common consequences include:

- Incorrect motion and trajectory prediction

- Poor alignment in sensor fusion systems

- Increased identity switches in tracking

- Reduced reliability in navigation and planning modules

In video-based systems, even small orientation inconsistencies across frames can destabilize temporal learning.
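A little arithmetic shows why small errors matter. The sketch below assumes a car-sized box of 4.5 m by 1.8 m (illustrative numbers, not taken from any dataset) and computes two quantities for a 5 degree yaw error: how far each bird's-eye-view corner moves when the box rotates about its center, and how far a straight-line extrapolation along the wrong heading drifts sideways after 20 m.

```python
import math


def corner_displacement(length, width, yaw_error_deg):
    """Distance a bird's-eye-view corner moves when the box rotates about its center."""
    r = math.hypot(length / 2, width / 2)   # center-to-corner distance
    d = math.radians(yaw_error_deg)
    return 2 * r * math.sin(d / 2)          # chord length of a rotation by d


def lateral_drift(distance_m, yaw_error_deg):
    """Sideways offset after traveling distance_m along a heading that is off by yaw_error_deg."""
    return distance_m * math.sin(math.radians(yaw_error_deg))


# Illustrative car-sized box (4.5 m x 1.8 m) with a 5 degree yaw error.
print(round(corner_displacement(4.5, 1.8, 5.0), 2))  # 0.21
print(round(lateral_drift(20.0, 5.0), 2))            # 1.74
```

Under these assumptions the box corners shift by only about 0.21 m, while the extrapolated position drifts about 1.74 m laterally, so a heading error that looks negligible on a single frame can become significant for trajectory prediction.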

Technical Challenges in Orientation Annotation

Labeling orientation accurately in video is technically demanding, particularly when objects rotate, overlap, or move rapidly across frames. Variations in camera angle and limited depth cues further reduce accuracy, so consistent cuboid alignment rules and validation checks are essential for reliable 3D video annotation.

Key challenges include:

- Ambiguous object geometry from certain viewpoints

- Occlusion and partial visibility

- Sensor noise and sparse depth information

- Rapid object rotation or direction changes

- Maintaining consistency across long sequences

These challenges make orientation one of the most error-prone aspects of 3D cuboid video labeling.
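Viewpoint ambiguity often shows up as a front/back confusion: a box whose yaw is correct only up to 180 degrees. A simple temporal check can flag these cases. The sketch below wraps frame-to-frame yaw differences into [-π, π) and reports frames where the change is close to 180 degrees; the 30 degree tolerance is an assumption to tune per dataset and frame rate.

```python
import math


def wrap_angle(a):
    """Map an angle in radians to the interval [-pi, pi)."""
    return (a + math.pi) % (2 * math.pi) - math.pi


def find_heading_flips(yaws, flip_tol_deg=30.0):
    """Return frame indices whose yaw differs from the previous frame by about 180 degrees.

    A jump like this usually means front and back were swapped on a
    symmetric-looking object, not that the object actually turned around.
    """
    tol = math.radians(flip_tol_deg)
    flips = []
    for i in range(1, len(yaws)):
        delta = abs(wrap_angle(yaws[i] - yaws[i - 1]))  # in [0, pi]
        if math.pi - delta < tol:
            flips.append(i)
    return flips


# Frame 2 was labeled back-to-front.
yaws = [0.10, 0.12, 0.11 + math.pi, 0.13, 0.14]
print(find_heading_flips(yaws))  # [2, 3]
```

A single flipped frame produces two flags, one entering and one leaving the flip, which makes isolated mistakes easy to spot during review.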

Techniques for Improving Orientation Accuracy in Video Cuboids

Professional 3D video annotation workflows combine several techniques to keep orientation accurate across frames. Annotators can label orientation at keyframes and interpolate between them, using perspective cues to adjust the cuboid wherever the interpolation drifts. Automated validation tools and consistent annotation guidelines further reduce errors and make 3D object tracking in complex video datasets more reliable.
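Keyframe interpolation needs care with angles, because yaw wraps around. Interpolating naively from 170 degrees to -170 degrees passes through 0 degrees, a spurious near-full rotation, instead of the 20 degree turn through 180 degrees. The sketch below interpolates yaw along the shortest arc between annotator-labeled keyframes; the dict-based keyframe format is a hypothetical choice, and position and size would normally be interpolated alongside it.

```python
import math


def wrap_angle(a):
    """Map an angle in radians to the interval [-pi, pi)."""
    return (a + math.pi) % (2 * math.pi) - math.pi


def interpolate_yaw(keyframes, frame):
    """Yaw at `frame`, linearly interpolated along the shortest arc between keyframes.

    keyframes: non-empty dict {frame_index: yaw_in_radians} set by an annotator.
    Frames before the first or after the last keyframe reuse the nearest keyframe.
    """
    frames = sorted(keyframes)
    if frame <= frames[0]:
        return wrap_angle(keyframes[frames[0]])
    if frame >= frames[-1]:
        return wrap_angle(keyframes[frames[-1]])
    for f0, f1 in zip(frames, frames[1:]):
        if f0 <= frame <= f1:
            t = (frame - f0) / (f1 - f0)
            delta = wrap_angle(keyframes[f1] - keyframes[f0])  # shortest signed turn
            return wrap_angle(keyframes[f0] + t * delta)


keys = {0: math.radians(170), 10: math.radians(-170)}
print(round(math.degrees(interpolate_yaw(keys, 2)), 6))  # 174.0
```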

Common approaches include:

- Ground-plane alignment rules

- Temporal smoothing across frames

- Keyframe-based orientation labeling

- Manual correction of automated orientation drift

- Cross-sensor validation using LiDAR or depth data

These methods help ensure orientation remains stable and meaningful throughout video sequences.
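Temporal smoothing has the same wrap-around pitfall. A minimal sketch, assuming yaw is stored in radians per frame: average the sine and cosine of the angles in a centered window and convert back with `math.atan2`, so that +179 and -179 degrees average to 180 degrees rather than 0. The half-width `window=2` is an arbitrary default.

```python
import math


def smooth_yaw(yaws, window=2):
    """Centered moving average of yaw angles computed on the unit circle.

    Averaging sin/cos instead of raw angles avoids the wrap-around artifact
    where +179 and -179 degrees would average to 0 degrees.
    `window` is the half-width: output i uses frames i-window .. i+window.
    """
    n = len(yaws)
    out = []
    for i in range(n):
        lo, hi = max(0, i - window), min(n, i + window + 1)
        s = sum(math.sin(a) for a in yaws[lo:hi])
        c = sum(math.cos(a) for a in yaws[lo:hi])
        out.append(math.atan2(s, c))
    return out


jittery = [0.00, 0.10, 0.00, 0.10, 0.00]
print([round(a, 3) for a in smooth_yaw(jittery)])
```

Smoothing also blurs genuine fast turns, so it is usually safer to apply it after front/back flips have been corrected and with a window short enough to preserve real rotation.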

Impact of Orientation Accuracy on Model Training and Inference

High-quality orientation annotation directly improves both training outcomes and real-world performance. Consistent orientation labels teach models reliable relationships between an object's appearance, pose, and motion, which reduces prediction errors at inference time. Reviewing and correcting orientation labels before training further improves dataset quality and overall model reliability.

Benefits include:

- More accurate trajectory prediction

- Improved object interaction modeling

- Reduced false positives in tracking

- Greater stability in downstream planning systems

For research teams working on advanced 3D perception, orientation quality often determines whether models generalize beyond controlled environments.

Quality Metrics for Orientation in 3D Video Annotation

Orientation accuracy can be measured with specific quality metrics. Evaluating angular error, cuboid alignment, and temporal stability across frames shows how reliably objects are oriented, and applying these metrics allows teams to catch labeling errors early and maintain the dataset quality needed for robust model training.
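Several of these metrics reduce to comparing angles correctly. The sketch below defines a wrapped angular error, the fraction of frames within an angular tolerance of a reference sequence, and the largest frame-to-frame yaw jump. The 5 degree tolerance is an assumed threshold; the reference can be sensor-derived ground truth or a second annotator's labels, in which case the tolerance rate doubles as a simple inter-annotator agreement score.

```python
import math


def angular_error(a, b):
    """Absolute yaw difference in radians, wrapped to [0, pi]."""
    return abs((a - b + math.pi) % (2 * math.pi) - math.pi)


def within_tolerance_rate(pred, ref, tol_deg=5.0):
    """Fraction of frames whose yaw lies within tol_deg of the reference sequence."""
    if not pred or len(pred) != len(ref):
        raise ValueError("sequences must be non-empty and equally long")
    tol = math.radians(tol_deg)
    hits = sum(1 for p, r in zip(pred, ref) if angular_error(p, r) <= tol)
    return hits / len(pred)


def max_frame_jump_deg(yaws):
    """Largest frame-to-frame yaw change in degrees, a simple temporal-stability indicator."""
    return max((math.degrees(angular_error(b, a)) for a, b in zip(yaws, yaws[1:])), default=0.0)


# Two annotators labeling the same four frames (yaw in radians).
annotator_a = [0.00, 0.05, 0.10, 0.15]
annotator_b = [0.01, 0.06, 0.30, 0.16]
print(within_tolerance_rate(annotator_a, annotator_b))  # 0.75
print(max_frame_jump_deg(annotator_b))
```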

Common evaluation criteria include:

- Angular error tolerance

- Orientation consistency across frames

- Alignment with ground truth sensor data

- Inter-annotator agreement on pose labeling

Tracking these metrics allows teams to systematically improve the quality of 3D cuboid video labeling.
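For alignment with sensor data, one rough check is to compare the annotated yaw with the dominant axis of the LiDAR points inside the cuboid, viewed from above. The sketch below computes that axis with a closed-form 2D principal component analysis. It recovers only an axis, not a direction, so the comparison ignores 180 degree differences; it is also unreliable for near-square footprints or heavily occluded objects, so it is best treated as a flagging heuristic rather than as ground truth.

```python
import math


def principal_axis_yaw(points):
    """Angle (radians, in (-pi/2, pi/2]) of the dominant axis of 2D bird's-eye-view points."""
    n = len(points)
    mx = sum(p[0] for p in points) / n
    my = sum(p[1] for p in points) / n
    cxx = sum((p[0] - mx) ** 2 for p in points) / n
    cyy = sum((p[1] - my) ** 2 for p in points) / n
    cxy = sum((p[0] - mx) * (p[1] - my) for p in points) / n
    # Orientation of the major eigenvector of the 2x2 covariance matrix.
    return 0.5 * math.atan2(2 * cxy, cxx - cyy)


def axis_error_deg(annotated_yaw, points):
    """Angle between annotated heading and point-cloud axis, ignoring 180 degree flips."""
    d = (annotated_yaw - principal_axis_yaw(points)) % math.pi
    return math.degrees(min(d, math.pi - d))


# Synthetic points from a 10 m x 0.6 m strip rotated to 30 degrees.
c30, s30 = math.cos(math.radians(30)), math.sin(math.radians(30))
pts = [(t * c30 - w * s30, t * s30 + w * c30) for t in range(-5, 6) for w in (-0.3, 0.3)]
print(round(axis_error_deg(math.radians(40), pts), 3))   # 10.0
print(round(axis_error_deg(math.radians(210), pts), 3))  # 0.0
```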

Integrating Orientation-Aware Cuboids into Research Pipelines

For researchers, orientation-aware cuboid annotation must integrate seamlessly into experimentation and training workflows. Cuboids that carry consistent orientation make it possible to track object rotation and depth over time, so researchers can build more reliable datasets and achieve better performance on complex 3D video analysis tasks.

Well-structured 3D cuboid video labeling supports:

- Pose estimation models

- Multi-object tracking and prediction systems

- Sensor fusion research

- Simulation and digital twin environments

Consistent orientation data makes it easier to test hypotheses, compare models, and reproduce results.
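Reproducibility depends as much on stating the angle convention as on the labels themselves. The sketch below serializes cuboid labels as JSON Lines, with a header record describing the convention and yaw normalized to [-π, π). The field names and the convention text are hypothetical examples, not a standard format.

```python
import json
import math


def wrap_angle(a):
    """Map an angle in radians to the interval [-pi, pi)."""
    return (a + math.pi) % (2 * math.pi) - math.pi


def to_record(frame, track_id, center, size, yaw):
    """One cuboid label as a flat dict (hypothetical schema)."""
    return {
        "frame": frame,
        "track_id": track_id,
        "center_xyz_m": list(center),
        "size_lwh_m": list(size),
        "yaw_rad": wrap_angle(yaw),  # normalized to [-pi, pi) so tools agree on range
    }


def dumps_jsonl(records, convention):
    """Serialize a header line describing the convention, then one line per record."""
    lines = [json.dumps({"convention": convention})]
    lines.extend(json.dumps(r) for r in records)
    return "\n".join(lines) + "\n"


CONVENTION = {
    "frame": "ego vehicle, x forward, y left, z up",
    "yaw": "radians, counter-clockwise from +x, range [-pi, pi)",
}
rec = to_record(3, "car_7", (1.0, 2.0, 0.5), (4.5, 1.8, 1.5), 3 * math.pi / 2)
print(dumps_jsonl([rec], CONVENTION), end="")
```

Keeping the convention in the file itself means a model comparison run months later does not depend on anyone remembering which way yaw was measured.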

Annotera’s Approach to Orientation-Accurate 3D Video Annotation

Annotera provides advanced 3D cuboid video labeling services designed to handle orientation complexity at scale.

Our approach includes:

- Orientation-specific annotation guidelines

- Temporal QA focused on pose stability

- Sensor-assisted orientation validation

- Research-ready output formats

This ensures annotated video data supports rigorous experimentation and production deployment alike.

Conclusion: Orientation Precision Is the Key to Reliable 3D Video AI

Orientation is one of the most challenging, and most impactful, elements of 3D video annotation. Without accurate pose information, even well-detected objects are misinterpreted downstream: their heading, motion, and interactions are predicted incorrectly.

By prioritizing orientation accuracy in 3D cuboid video labeling and partnering with a specialized annotation service provider, research teams can unlock more stable, realistic, and deployable 3D perception models. Contact us to improve your 3D perception accuracy with expert 3D cuboid video labeling services.