As digital experiences move beyond screens and keyboards, gestures are becoming a primary mode of interaction. From touchless kiosks and smart environments to AR/VR and accessibility tools, gesture-based interfaces allow users to communicate intent naturally through movement. Keypoint detection for AI enables models to track human joints and movements across video frames, forming the foundation for accurate gesture recognition, motion analysis, and real-time interaction systems.

For AI systems to recognize gestures reliably, they must understand subtle temporal patterns in human motion. This capability depends heavily on keypoint detection for AI, where accurately labeled joint and landmark data teaches models how gestures begin, evolve, and conclude in real-world video.

What Is Keypoint Detection for AI?

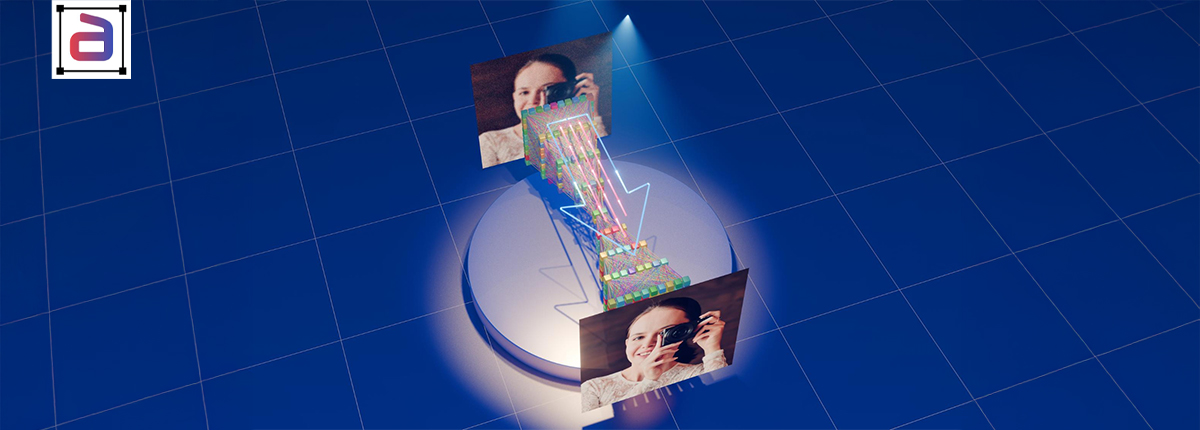

Keypoint detection for AI refers to identifying and tracking specific landmarks, such as points on the hands, arms, torso, and face, within video frames. These landmarks form the foundation for recognizing gestures, posture changes, and intent-driven movement. Training these models relies on keypoint annotation, which means labeling the critical points frame by frame so that models learn structure, motion, and spatial relationships for tasks like pose estimation and gesture recognition.

In a service-led annotation context, keypoint detection for AI is enabled through:

- Precise keypoint annotation across video frames

- Temporal alignment of landmarks

- Multi-keypoint relationship mapping

- Dataset-agnostic outputs for model training

This approach ensures that gesture recognition models learn from accurate, structured motion data.
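To make the list above concrete, here is a minimal sketch of what a dataset-agnostic keypoint annotation record might look like in Python. The class and field names (`Keypoint`, `FrameAnnotation`, `SequenceAnnotation`, `visible`, `skeleton`) are hypothetical and do not follow any particular standard. Per-frame timestamps stand in for temporal alignment, the `skeleton` pairs stand in for multi-keypoint relationships, and plain JSON output keeps the data independent of any one training framework.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class Keypoint:
    """One labeled landmark in one frame, in pixel coordinates."""
    name: str              # hypothetical schema name, e.g. "right_wrist"
    x: float
    y: float
    visible: bool = True   # False when the landmark is occluded or out of frame


@dataclass
class FrameAnnotation:
    frame_index: int
    timestamp_s: float     # ties each set of landmarks to the video timeline
    keypoints: list[Keypoint] = field(default_factory=list)


@dataclass
class SequenceAnnotation:
    video_id: str
    fps: float
    # Pairs of keypoint names that are connected (e.g. elbow to wrist),
    # recording the relationships the model should learn.
    skeleton: list[tuple[str, str]] = field(default_factory=list)
    frames: list[FrameAnnotation] = field(default_factory=list)

    def to_json(self) -> str:
        """Serialize to plain JSON so any training framework can consume it."""
        return json.dumps(asdict(self), indent=2)


if __name__ == "__main__":
    seq = SequenceAnnotation(
        video_id="clip_001",
        fps=30.0,
        skeleton=[("right_elbow", "right_wrist")],
        frames=[
            FrameAnnotation(0, 0.0, [Keypoint("right_elbow", 320.0, 240.0),
                                     Keypoint("right_wrist", 360.0, 200.0)]),
        ],
    )
    print(seq.to_json())
```

Storing an explicit `visible` flag, rather than dropping occluded points, lets downstream tools distinguish "not annotated" from "annotated as hidden".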

How Keypoints Enable Gesture Recognition

Gestures are defined not by static poses but by motion sequences. By mapping critical body joints across frames, keypoints give models a structured representation of motion. This improves temporal analysis and lets AI systems distinguish subtle gestures and interpret complex human movements. Specifically, keypoints allow AI models to capture:

- Direction and velocity of movement

- Relative positioning between joints

- Timing and duration of gestures

- Transitions between gestures and idle states
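The motion cues listed above can be derived directly from keypoint tracks. The sketch below assumes each track is a list of `(x, y)` pixel coordinates for a single keypoint, one entry per frame, at a known frame rate. The function names are illustrative, and a production pipeline would typically smooth the track before differencing.

```python
import math

Point = tuple[float, float]


def velocity(track: list[Point], fps: float) -> list[Point]:
    """Per-frame velocity in pixels/second, using forward differences."""
    return [((x1 - x0) * fps, (y1 - y0) * fps)
            for (x0, y0), (x1, y1) in zip(track, track[1:])]


def speed(v: Point) -> float:
    """Magnitude of a velocity vector."""
    return math.hypot(v[0], v[1])


def direction_deg(v: Point) -> float:
    """Direction of motion in degrees (image coordinates, so +y points down)."""
    return math.degrees(math.atan2(v[1], v[0]))


def relative_position(anchor: Point, other: Point) -> Point:
    """Position of one keypoint relative to another, e.g. wrist relative to shoulder."""
    return (other[0] - anchor[0], other[1] - anchor[1])


def duration_s(num_frames: int, fps: float) -> float:
    """Duration of a gesture that spans num_frames frames."""
    return num_frames / fps
```

For example, a wrist moving one pixel to the right per frame at 30 fps has a velocity of `(30.0, 0.0)` pixels per second and a direction of 0 degrees.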

By learning these patterns, models trained with keypoint detection data can distinguish intentional gestures from random motion.
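A trained model learns the difference between intentional gestures and random motion from labeled data. A simple rule-based heuristic still shows the kind of signal involved: purposeful movement tends to be sustained, while incidental twitches are brief. The sketch below uses hypothetical `threshold` and `min_frames` parameters and keeps only runs where a keypoint's speed stays above the threshold for enough consecutive frames. It is an illustration, not a substitute for a learned classifier.

```python
def active_segments(speeds: list[float], threshold: float,
                    min_frames: int) -> list[tuple[int, int]]:
    """Return (start, end) frame ranges, end exclusive, where speed stays above
    `threshold` for at least `min_frames` consecutive frames."""
    segments: list[tuple[int, int]] = []
    start = None  # first frame of the current above-threshold run, if any
    for i, s in enumerate(speeds):
        if s > threshold:
            if start is None:
                start = i
        elif start is not None:
            # The run just ended: keep it only if it lasted long enough.
            if i - start >= min_frames:
                segments.append((start, i))
            start = None
    # Close a run that continues to the last frame.
    if start is not None and len(speeds) - start >= min_frames:
        segments.append((start, len(speeds)))
    return segments


# The one-frame spike at index 2 is discarded; the sustained run is kept.
print(active_segments([0.0, 0.0, 5.0, 0.0, 0.0, 6.0, 7.0, 8.0, 9.0, 0.0],
                      threshold=1.0, min_frames=3))  # [(5, 9)]
```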

UX and Product Use Cases for Gesture-Based AI

Gesture-based AI enhances UX by enabling touchless interaction, intuitive navigation, and accessibility features across devices. It is widely used in gaming, AR/VR, automotive controls, and smart interfaces.

Touchless Interfaces

Gesture recognition enables hygienic, hands-free interaction in public kiosks, healthcare settings, and industrial environments.

Accessibility and Assistive Technologies

Keypoint-based gesture detection supports inclusive interfaces for users with mobility or speech limitations.

Smart Environments and IoT

AI systems can interpret gestures to control lighting, appliances, or displays without physical input.

AR/VR and Immersive Experiences

Accurate gesture recognition enhances realism and responsiveness in immersive digital environments.

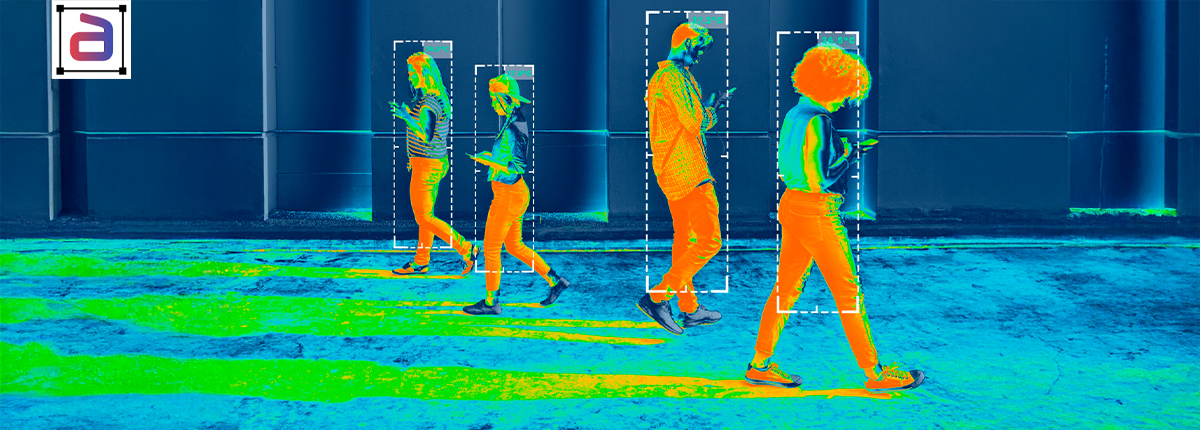

Annotation Challenges in Gesture Recognition Systems

Annotation for gesture recognition systems is complicated by occlusion, motion blur, and varying viewpoints, and inconsistent labeling directly reduces model accuracy. Maintaining temporal consistency and precise keypoint placement is especially difficult for complex or overlapping human movements. Training gesture-aware AI introduces several annotation challenges:

- Subtle Motion: Small hand or finger movements carry meaning

- Occlusion: Hands overlap with objects or move out of frame

- User Variability: Gestures vary across users and cultures

- Temporal Precision: Gesture boundaries must be defined accurately

Addressing these challenges requires carefully designed keypoint annotation strategies.
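One common way to handle occlusion in keypoint tracks is to mark hidden landmarks explicitly and fill only short gaps. The sketch below assumes occluded frames are stored as `None` and linearly interpolates gaps of up to `max_gap` frames (a hypothetical, project-specific limit) between visible neighbours. Longer gaps, and gaps at the start or end of a clip, are left unfilled so they can be reviewed rather than guessed.

```python
from typing import Optional

Point = tuple[float, float]


def fill_short_gaps(track: list[Optional[Point]],
                    max_gap: int) -> list[Optional[Point]]:
    """Linearly interpolate runs of None of length <= max_gap that have a
    visible keypoint on both sides; leave all other gaps as None."""
    out = list(track)
    n = len(track)
    i = 0
    while i < n:
        if track[i] is not None:
            i += 1
            continue
        # Find the end of this run of occluded frames.
        j = i
        while j < n and track[j] is None:
            j += 1
        gap = j - i
        if i > 0 and j < n and gap <= max_gap:
            (x0, y0), (x1, y1) = track[i - 1], track[j]
            for k in range(i, j):
                t = (k - (i - 1)) / (gap + 1)  # fraction of the way between anchors
                out[k] = (x0 + t * (x1 - x0), y0 + t * (y1 - y0))
        i = j
    return out
```

Whether short gaps should be interpolated at all, or simply flagged as occluded, is a guideline decision; the point of the sketch is that the rule is explicit and applied the same way by every annotator.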

Annotation Strategies for Gesture-Focused Models

Annotation strategies for gesture-focused models emphasize consistent keypoint labeling and temporal alignment. Including diverse scenarios improves robustness, while standardized guidelines and iterative quality checks raise accuracy and help models generalize across complex gesture variations.
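Iterative quality checks need a measurable agreement score. A widely used metric for keypoints is PCK (Percentage of Correct Keypoints), which is the share of keypoints that fall within a tolerance of a reference annotation. The tolerance is a fraction `alpha` of a reference size, such as hand or torso length. The sketch below applies PCK to compare an annotator's frame against a reviewer's. The choice of reference size and `alpha` is an assumption to tune per project.

```python
import math

Point = tuple[float, float]


def pck(pred: dict[str, Point], ref: dict[str, Point],
        ref_size: float, alpha: float = 0.1) -> float:
    """Share of keypoints in `ref` whose counterpart in `pred` lies within
    alpha * ref_size pixels. Keypoints missing from `pred` count as wrong."""
    if not ref:
        raise ValueError("reference annotation is empty")
    tol = alpha * ref_size
    correct = sum(
        1 for name, ref_pt in ref.items()
        if name in pred and math.dist(pred[name], ref_pt) <= tol
    )
    return correct / len(ref)


reviewer = {"wrist": (0.0, 0.0), "index_tip": (10.0, 10.0)}
annotator = {"wrist": (1.0, 0.0), "index_tip": (30.0, 10.0)}
# Tolerance is 0.1 * 100 = 10 px: the wrist agrees, the fingertip does not.
print(pck(annotator, reviewer, ref_size=100.0))  # 0.5
```

Frames that score below an agreed threshold can then be routed back for rework instead of entering the training set.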

High-Frequency Keypoint Labeling

Dense temporal labeling captures rapid motion changes across consecutive frames, so fast gestures are not reduced to a few disconnected poses. Consistent sampling also helps models learn smoother temporal transitions, detect subtle movements, and perform reliably in dynamic, real-time scenarios.
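The effect of labeling density comes down to simple arithmetic. Take a 30 fps video and a quick gesture lasting about 0.3 seconds (illustrative numbers). The gesture spans roughly 9 frames: labeling every frame captures all 9 poses, while labeling only every 5th frame can leave as few as 2. The hypothetical helper below counts the labeled frames that fall inside a gesture for a given labeling stride.

```python
def labeled_frames_in_gesture(duration_s: float, fps: float, stride: int,
                              start_frame: int = 0) -> int:
    """Count annotated frames inside a gesture when only every
    `stride`-th frame of the video (frame index % stride == 0) is labeled."""
    n = round(duration_s * fps)  # number of frames the gesture spans
    return sum(1 for f in range(start_frame, start_frame + n) if f % stride == 0)


print(labeled_frames_in_gesture(0.3, 30.0, stride=1))  # 9
print(labeled_frames_in_gesture(0.3, 30.0, stride=5))  # 2
```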

Multi-Keypoint Relationship Modeling

Annotating the relationships between hands, arms, and torso captures the spatial and temporal dependencies between joints, which helps models understand coordinated movement. As a result, AI systems can recognize complex actions rather than reasoning about isolated keypoint positions.
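Relationship features are usually computed from several keypoints at once. The sketch below shows two common examples under stated assumptions. The first is a joint angle, where shoulder, elbow, and wrist give elbow flexion. The second is pose normalization relative to two anchor joints such as the shoulders, which makes coordinates comparable across users standing at different distances from the camera. The keypoint names are hypothetical.

```python
import math

Point = tuple[float, float]


def joint_angle_deg(a: Point, b: Point, c: Point) -> float:
    """Angle at joint b between segments b->a and b->c, in degrees.
    With a = shoulder, b = elbow, c = wrist this is the elbow angle."""
    v1 = (a[0] - b[0], a[1] - b[1])
    v2 = (c[0] - b[0], c[1] - b[1])
    n1, n2 = math.hypot(*v1), math.hypot(*v2)
    if n1 == 0 or n2 == 0:
        raise ValueError("keypoints coincide; angle is undefined")
    cos_theta = (v1[0] * v2[0] + v1[1] * v2[1]) / (n1 * n2)
    # Clamp to [-1, 1] to guard against floating-point drift before acos.
    return math.degrees(math.acos(max(-1.0, min(1.0, cos_theta))))


def normalize_pose(points: dict[str, Point], left: str,
                   right: str) -> dict[str, Point]:
    """Re-express keypoints relative to the midpoint of two anchor joints,
    scaled by the distance between them (e.g. shoulder width)."""
    (lx, ly), (rx, ry) = points[left], points[right]
    cx, cy = (lx + rx) / 2, (ly + ry) / 2
    scale = math.hypot(rx - lx, ry - ly)
    if scale == 0:
        raise ValueError("anchor joints coincide; cannot normalize")
    return {name: ((x - cx) / scale, (y - cy) / scale)
            for name, (x, y) in points.items()}
```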

Context-Aware Annotation Guidelines

Annotation rules explicitly account for background motion, non-gesture activity, occlusion, and user intent, so labels remain consistent across scenes. Incorporating these contextual cues improves labeling accuracy and helps gesture recognition models interpret movement reliably in real-world environments.
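Context-aware guidelines often translate into a label schema that names non-gesture activity explicitly instead of leaving it unlabeled. The sketch below assumes a hypothetical schema with a few gesture classes plus `IDLE` and `NON_GESTURE_MOTION`. It checks one possible guideline rule: every frame of a clip belongs to exactly one labeled segment.

```python
from enum import Enum


class SegmentLabel(Enum):
    # Gesture classes here are hypothetical; real schemas are project-specific.
    SWIPE_LEFT = "swipe_left"
    SWIPE_RIGHT = "swipe_right"
    PINCH = "pinch"
    # Explicit non-gesture classes, so background activity is labeled, not skipped.
    IDLE = "idle"
    NON_GESTURE_MOTION = "non_gesture_motion"  # e.g. scratching, reaching for a cup


def check_coverage(segments: list[tuple[int, int, SegmentLabel]],
                   num_frames: int) -> list[str]:
    """Return guideline violations for (start, end_exclusive, label) segments:
    invalid ranges, unlabeled frames, and frames with overlapping labels."""
    problems: list[str] = []
    counts = [0] * num_frames
    for start, end, label in segments:
        if not (0 <= start < end <= num_frames):
            problems.append(f"invalid range {start}-{end} for {label.value}")
            continue
        for f in range(start, end):
            counts[f] += 1
    for f, c in enumerate(counts):
        if c == 0:
            problems.append(f"frame {f} unlabeled")
        elif c > 1:
            problems.append(f"frame {f} has {c} overlapping labels")
    return problems
```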

Temporal Validation

Sequences are reviewed end to end to confirm that keypoints stay consistent across consecutive frames. Validating annotations over time reduces jitter and labeling errors, so models learn coherent motion patterns and perform reliably in dynamic, real-world scenarios.
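Part of temporal validation can be automated by looking for jitter. The sketch below is a simple heuristic, not a standard tool. It computes the second difference of a keypoint track, `p[i-1] - 2*p[i] + p[i+1]`, which stays small for smooth motion and spikes around a misplaced point. `max_accel_px` is a hypothetical threshold in pixels per frame squared. Flagged frames go to a reviewer rather than being corrected automatically.

```python
import math

Point = tuple[float, float]


def flag_jitter(track: list[Point], max_accel_px: float) -> list[int]:
    """Return indices of interior frames whose second difference exceeds
    max_accel_px. A single misplaced point raises the value at its own frame
    and at both neighbours, so flags appear in small clusters around an error."""
    flagged = []
    for i in range(1, len(track) - 1):
        (x0, y0), (x1, y1), (x2, y2) = track[i - 1], track[i], track[i + 1]
        ax, ay = x0 - 2 * x1 + x2, y0 - 2 * y1 + y2
        if math.hypot(ax, ay) > max_accel_px:
            flagged.append(i)
    return flagged


# Frame 3 was clicked at x=13 instead of x=3; frames 2-4 are flagged for review.
track = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0), (13.0, 0.0), (4.0, 0.0), (5.0, 0.0)]
print(flag_jitter(track, max_accel_px=5.0))  # [2, 3, 4]
```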

Why UX Teams Outsource Keypoint Annotation Services

Outsourcing keypoint annotation lets UX teams scale datasets efficiently without building internal labeling operations, while specialized vendors maintain consistency and quality. Teams can then focus on design and innovation while accelerating gesture-based AI development. UX and product teams often partner with annotation service providers to:

- Accelerate prototyping and iteration

- Ensure consistent gesture labeling

- Improve model generalization across users

- Reduce internal annotation overhead

A specialized service partner enables faster deployment of gesture-enabled experiences.

Annotera’s Keypoint Annotation Services for Gesture AI

Annotera’s keypoint annotation services deliver precise, scalable labeling for complex motion data, and standardized workflows keep annotations consistent. This helps businesses improve model accuracy, deploy faster, and achieve reliable performance across diverse gesture recognition use cases. Annotera supports gesture recognition initiatives with service-led keypoint annotation:

- Annotators trained on human motion and interaction patterns

- Custom gesture and keypoint schemas

- Multi-stage QA for temporal accuracy

- Scalable workflows for video-heavy datasets

- Dataset-agnostic services with full client data ownership

Conclusion: Designing AI That Understands Human Intent

Gesture recognition represents a shift toward more intuitive human–computer interaction. However, AI systems can only interpret gestures as well as the data they are trained on. Designing AI that understands human intent therefore requires accurate annotation and contextual modeling. With that foundation, businesses can build intuitive, responsive systems that align closely with real human behavior and expectations.

By leveraging professional keypoint detection for AI, UX teams can build models that recognize human gestures accurately, adapt across users, and deliver seamless interactive experiences. With the right annotation strategy and partner, gestures become a reliable input—not a source of friction.

Building gesture-enabled products or touchless interfaces?

Annotera’s keypoint detection for AI services help UX teams train models that understand human motion with precision. Talk to Annotera to design gesture-focused keypoint schemas, run pilots, and scale video annotation for gesture recognition.