Artificial intelligence has advanced rapidly: larger models, faster compute, and increasingly complex architectures dominate industry conversations. Yet when AI systems fail to deliver expected outcomes in real-world environments, the root cause is rarely the algorithm itself. More often, it is the quality of the data powering the model. Data annotation for AI models ensures that machines learn from accurate, consistent, and context-rich labels. By transforming raw data into reliable training inputs, it directly improves model accuracy, scalability, and real-world performance across enterprise AI applications.

As AI adoption accelerates across enterprises, the demand for accurate, scalable training data has intensified. Data annotation outsourcing enables organizations to access specialized expertise, ensure consistent labeling, and build AI models that perform reliably in complex, real-world environments.

At the center of that data foundation lies data annotation. Accurate, consistent, and context-aware labeling is what transforms raw data into intelligence that machines can learn from. Without it, even the most advanced AI models struggle to generalize, scale, or earn business trust. This is why data annotation is not just a supporting function; it is the keystone of effective AI models.

Data Annotation for AI Models: From Raw Data to Real Intelligence

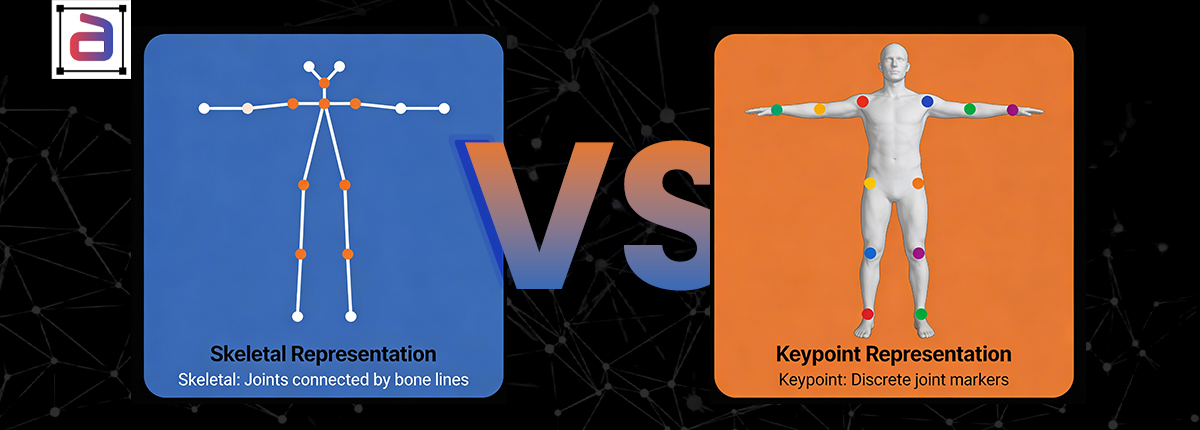

Most enterprise data is unstructured—text, images, video, audio, and sensor data. On its own, this information holds little value for machine learning systems. Data annotation adds structure and semantic meaning by applying human judgment to define what matters within the data.

- Identifying objects, defects, or regions of interest in images

- Tagging intent, entities, and sentiment in text data

- Labeling speakers, emotions, and timestamps in audio files

- Tracking actions, behaviors, and events across video frames

Each label becomes a learning signal. Together, they define how an AI model interprets the world. Poorly defined or inconsistent labels distort that understanding—no matter how advanced the model architecture may be.

Why Data Annotation for AI Models Drives AI Performance

In theory, AI models learn patterns. In practice, they learn exactly what the data teaches them, including its biases, gaps, and ambiguities. Many AI performance issues can be traced directly to annotation quality, and four failure modes recur.

Inconsistent Annotation

Vague or poorly documented guidelines lead to inconsistent labeling. When annotators interpret the same data differently, models learn contradictions rather than reliable patterns.
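
One common way to make inconsistency visible is to have two annotators label the same sample and measure how often they agree beyond chance. The sketch below computes Cohen's kappa in plain Python. The sample labels and the function name are illustrative assumptions, not part of any specific annotation platform.

```python
from collections import Counter

def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators who labeled the same items in the same order."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("both annotators must label the same non-empty item set")
    n = len(labels_a)
    # Observed agreement: fraction of items where both annotators chose the same label.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Chance agreement, estimated from each annotator's own label frequencies.
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    expected = sum(freq_a[label] * freq_b[label] for label in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used one identical label throughout
    return (observed - expected) / (1 - expected)

# Illustrative sentiment labels from two hypothetical annotators.
annotator_a = ["positive", "negative", "positive", "neutral", "negative", "positive"]
annotator_b = ["positive", "negative", "neutral", "neutral", "negative", "negative"]
kappa = cohens_kappa(annotator_a, annotator_b)
print(f"Cohen's kappa: {kappa:.2f}")
```

A kappa near 1 suggests the guidelines are being read the same way; a low value is usually a prompt to revisit the guidelines before labeling more data.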

Missing Edge Cases

Rare but business-critical scenarios—fraud attempts, system failures, or abnormal user behavior—are often underrepresented in training datasets. Models then fail precisely when accuracy matters most.
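
A simple first check for missing edge cases is to look at how labels are distributed before training. The following is a minimal sketch, assuming a flat list of class labels and a hypothetical 5% threshold; the right threshold depends on the use case.

```python
from collections import Counter

def underrepresented_labels(labels, min_share=0.05):
    """Return each label whose share of the dataset falls below min_share."""
    counts = Counter(labels)
    total = len(labels)
    return {
        label: count / total
        for label, count in counts.items()
        if count / total < min_share
    }

# Illustrative dataset: fraud is rare but business-critical.
labels = ["legitimate"] * 97 + ["fraud"] * 3
rare = underrepresented_labels(labels, min_share=0.05)
for label, share in rare.items():
    print(f"{label}: {share:.1%} of examples")
```

Labels flagged this way are candidates for targeted data collection or additional annotation effort.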

Weak Quality Assurance

Without structured QA processes such as gold standards, peer reviews, and adjudication, annotation errors propagate silently across datasets.
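
Two of these QA steps can be sketched in a few lines: scoring an annotator against a gold-standard set, and resolving disagreements by majority vote with ties escalated to a human adjudicator. The item IDs, labels, and function names below are hypothetical, and real workflows typically add reviewer roles and audit trails on top.

```python
from collections import Counter

def gold_accuracy(annotations, gold):
    """Share of gold-standard items the annotator labeled correctly (None if no overlap)."""
    scored = [item for item in gold if item in annotations]
    if not scored:
        return None
    return sum(annotations[item] == gold[item] for item in scored) / len(scored)

def adjudicate(votes):
    """Majority vote per item; ties and empty votes map to None for human review."""
    resolved = {}
    for item, labels in votes.items():
        ranked = Counter(labels).most_common(2)
        tie = len(ranked) == 2 and ranked[0][1] == ranked[1][1]
        resolved[item] = None if (not ranked or tie) else ranked[0][0]
    return resolved

# Illustrative data: two gold items seeded into an annotator's queue.
gold = {"img_1": "defect", "img_2": "ok"}
annotator = {"img_1": "defect", "img_2": "defect", "img_3": "ok"}
accuracy = gold_accuracy(annotator, gold)

# Illustrative peer-review votes: img_4 is a tie and needs adjudication.
votes = {"img_3": ["ok", "ok", "defect"], "img_4": ["ok", "defect"]}
final = adjudicate(votes)
print(accuracy, final)
```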

Ontology and Label Drift

As projects evolve, label definitions often change. Without strong governance, datasets become fragmented, making retraining inefficient and unreliable.
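
One way to keep older datasets usable after a label change is to version the ontology and migrate records explicitly, flagging anything that cannot be mapped. This is a minimal sketch: the label names, the `ONTOLOGY_V2` set, and the `V1_TO_V2` mapping are all hypothetical.

```python
# Hypothetical current ontology (version 2).
ONTOLOGY_V2 = {"car", "truck", "pedestrian", "cyclist"}
# Hypothetical migration map from retired v1 labels to their v2 replacements.
V1_TO_V2 = {"vehicle_small": "car", "vehicle_large": "truck", "person": "pedestrian"}

def migrate_labels(records, ontology, migration):
    """Map retired labels to current ones; collect records that fit neither version."""
    migrated, unresolved = [], []
    for record in records:
        label = migration.get(record["label"], record["label"])
        if label in ontology:
            migrated.append({**record, "label": label})
        else:
            unresolved.append(record)  # needs a guideline decision or relabeling
    return migrated, unresolved

records = [
    {"id": 1, "label": "person"},   # retired v1 label
    {"id": 2, "label": "cyclist"},  # already valid in v2
    {"id": 3, "label": "animal"},   # not defined in either version
]
migrated, unresolved = migrate_labels(records, ONTOLOGY_V2, V1_TO_V2)
print(migrated)
print(unresolved)
```

Keeping the unresolved records separate, rather than silently dropping or guessing them, keeps retraining sets consistent with a single ontology version.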

Industry research consistently shows that poor data quality costs organizations millions annually through rework, delayed deployments, and unreliable AI outputs.

How Data Annotation for AI Models Solves AI Performance Gaps

The AI industry is undergoing a clear shift from model-centric experimentation to data-centric AI. Increasingly, the fastest performance gains come not from changing algorithms, but from improving training data quality.

Data-centric AI focuses on:

- Clear annotation guidelines and well-defined ontologies

- Balanced and representative datasets

- Systematic capture of edge cases

- Ongoing measurement of label accuracy and consistency

- Alignment between business objectives and labeling strategy

This approach is especially critical in enterprise and regulated environments, where explainability, fairness, and auditability are essential.

The Business Impact of High-Quality Annotation

Annotation quality has a direct and measurable impact on AI ROI:

- Faster time to production: Clean, consistent labels reduce retraining cycles.

- Greater stakeholder trust: Reliable data leads to stable and explainable predictions.

- Lower operational costs: Fewer errors mean less rework and wasted compute.

- Reduced risk: Strong governance supports compliance and data security.

Data annotation is not a cost center—it is a strategic performance driver.

What Enterprise-Grade Data Annotation Looks Like

When annotation is precise, consistent, and aligned to real-world edge cases, models generalize better, drift less, and deliver measurable business outcomes. When annotation is rushed or loosely governed, even sophisticated architectures can underperform. Industry estimates reinforce this point: Gartner estimates that poor data quality costs organizations an average of $12.9 million per year.

Whether managed internally or through data annotation outsourcing, high-performing AI teams follow consistent best practices:

- Clearly defined label taxonomies and decision rules

- Trained and continuously calibrated annotators

- Multi-layer quality assurance frameworks

- Measurable quality metrics and feedback loops

- Security, confidentiality, and compliance by design

Maintaining this level of rigor at scale is challenging, which is why many organizations partner with a specialized data annotation company.

How Annotera Builds AI-Ready Datasets

At Annotera, we treat data annotation as an engineering discipline, not a transactional task. Our structured approach to data annotation outsourcing is designed to support production-grade AI systems. Data annotation for AI models forms the foundation of accurate, scalable intelligence: it transforms raw data into structured learning signals, enabling enterprises to train reliable AI systems that perform consistently across real-world scenarios.

We help organizations:

- Design precise annotation guidelines and ontologies

- Deploy trained annotators aligned to domain requirements

- Implement rigorous QA and adjudication workflows

- Scale annotation throughput without compromising accuracy

- Protect sensitive data through enterprise-grade security controls

From early experimentation to large-scale production and evaluation datasets, Annotera operates as an extension of your AI team—focused on long-term performance and trust.

Why AI Performance Starts with Data Annotation for AI Models

AI success does not begin with models. It begins with decisions—decisions encoded into data through annotation. When those decisions are clear, consistent, and aligned with real-world complexity, models perform better, scale faster, and deliver meaningful business impact.

In an era where AI differentiation is increasingly defined by data quality, high-quality annotation is no longer optional. Instead, it is the keystone holding the entire system together.

Build Stronger AI Models with Annotera

If your AI initiatives are slowed by noisy labels, inconsistent datasets, or scaling challenges, it’s time to strengthen your data foundation. Partner with Annotera—a trusted data annotation company—to bring structure, quality, and governance to your AI training data through expert-led data annotation outsourcing. Let’s turn your data into dependable intelligence.