Keeping online platforms safe requires timely intervention to prevent harmful content from spreading. As volumes grow, platforms rely on automated systems to flag risks quickly, while human reviewers provide judgment and accountability. Content moderation labeling supplies the structured data that both automated filtering and effective human review depend on.

For a general technical audience, understanding how these approaches work together clarifies why proactive safety depends on data, design, and governance, not on technology alone.

Why Proactive Moderation Matters

Reactive moderation addresses harm after exposure, which can damage users and erode trust. Proactive moderation aims to identify harmful, misleading, or inappropriate material before it reaches users, reducing its reach and impact. By addressing risks early, platforms can protect brand reputation, improve user trust, and maintain compliance with community guidelines while supporting a healthier and more reliable digital environment.

Consequently, platforms prioritize early detection mechanisms supported by high-quality labeled data.

What Automated Filtering Does Well

Automated filtering applies machine learning models to scan content at scale. As a result, platforms can process vast volumes in near real time.

Key strengths include:

- Speed and scalability

- Consistent application of rules

- Immediate prioritization of high-risk content

However, automation depends heavily on the quality of its training data.

Where Human Review Adds Value

Human reviewers evaluate context, intent, and edge cases that models struggle to interpret. Therefore, human judgment is essential for fairness and accuracy.

Human review supports:

- Resolution of borderline or ambiguous content

- Policy interpretation and escalation

- Appeals and user trust mechanisms

Together, these capabilities help reduce over-enforcement and bias.

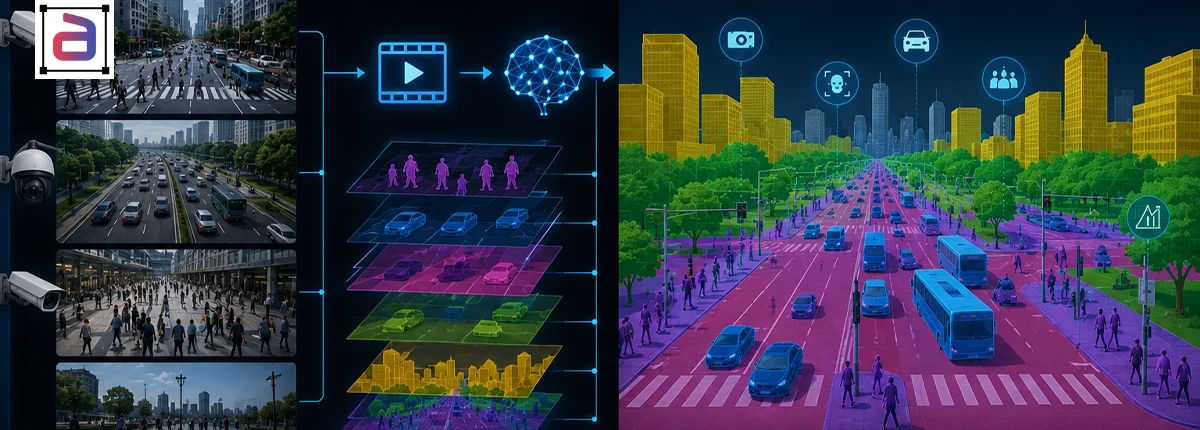

How Content Moderation Labeling Connects Both Approaches

Content moderation labeling defines categories, severity levels, and contextual cues that guide both automated systems and human reviewers. As a result, labels become the shared language across moderation pipelines.

Well-designed labeling enables:

- Accurate model training

- Efficient reviewer decision-making

- Continuous feedback between humans and AI
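To make the idea of labels as a shared language concrete, here is a minimal sketch of what one label record could look like. The class names, field names, category strings, and the three-level severity scale are all illustrative assumptions, not a schema described in this article.

```python
from dataclasses import dataclass
from enum import IntEnum


class Severity(IntEnum):
    """Hypothetical three-level severity scale; higher means more harmful."""
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass(frozen=True)
class ModerationLabel:
    """One label applied to one piece of content (illustrative schema)."""
    content_id: str
    category: str                        # e.g. "harassment" or "spam" (assumed names)
    severity: Severity
    context_cues: tuple[str, ...] = ()   # e.g. ("quoted_speech", "satire")
    source: str = "model"                # who produced the label: "model" or "human"


# The same record shape can be written by a classifier or by a reviewer,
# which is what lets both sides of the pipeline read each other's output.
label = ModerationLabel(
    content_id="post-123",
    category="harassment",
    severity=Severity.MEDIUM,
    context_cues=("quoted_speech",),
)
```

Keeping the record immutable and using an ordered severity type is one possible design choice: it makes labels safe to log for audits and easy to compare against thresholds.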

Designing Hybrid Moderation Pipelines

Clear Escalation Thresholds

Labels determine when content moves from automation to human review.
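As a rough illustration of an escalation rule, the sketch below routes content based on a label's severity and the model's confidence. The function name, the threshold values, and the three outcomes are assumptions chosen for the example; real thresholds would come from a platform's own policy and measured error rates.

```python
def route(severity: int, confidence: float,
          auto_threshold: float = 0.95,
          review_threshold: float = 0.60) -> str:
    """Decide where a flagged item goes next (hypothetical policy).

    severity:   1 (low) to 3 (high), taken from the content label.
    confidence: model confidence in that label, between 0.0 and 1.0.
    Returns "auto_action", "human_review", or "allow".
    """
    high_severity = severity >= 3
    # Only act automatically when the label is both severe and very confident.
    if high_severity and confidence >= auto_threshold:
        return "auto_action"
    # Severe-but-uncertain and confident-but-milder cases go to a person.
    if high_severity or confidence >= review_threshold:
        return "human_review"
    return "allow"


print(route(severity=3, confidence=0.99))  # auto_action
print(route(severity=3, confidence=0.30))  # human_review
print(route(severity=1, confidence=0.20))  # allow
```

Sending every high-severity item to a reviewer unless the model is very confident is a conservative choice that trades reviewer workload for fewer unfair automated takedowns.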

Feedback Loops

Reviewer decisions inform model retraining and refinement.
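One simple way to picture this loop is to collect the cases where a reviewer overrode the model and turn them into corrected training examples. The sketch below assumes a hypothetical `ReviewOutcome` record and category names; it does not describe any particular platform's retraining process.

```python
from dataclasses import dataclass


@dataclass
class ReviewOutcome:
    """A reviewer's decision on an item the model had already labeled (assumed shape)."""
    content_id: str
    model_category: str
    reviewer_category: str


def build_retraining_set(outcomes: list[ReviewOutcome]) -> list[tuple[str, str]]:
    """Return (content_id, corrected_category) pairs where the reviewer overrode the model."""
    return [
        (o.content_id, o.reviewer_category)
        for o in outcomes
        if o.reviewer_category != o.model_category
    ]


def override_rate(outcomes: list[ReviewOutcome]) -> float:
    """Fraction of reviewed items where the reviewer disagreed with the model."""
    if not outcomes:
        return 0.0
    return len(build_retraining_set(outcomes)) / len(outcomes)


outcomes = [
    ReviewOutcome("post-1", "spam", "spam"),
    ReviewOutcome("post-2", "harassment", "none"),
    ReviewOutcome("post-3", "spam", "spam"),
    ReviewOutcome("post-4", "none", "none"),
]
print(build_retraining_set(outcomes))  # [('post-2', 'none')]
print(override_rate(outcomes))         # 0.25
```

Tracking the override rate over time gives a rough signal of whether retraining is actually closing the gap between model output and reviewer judgment.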

Governance and Transparency

Documented labels support audits and explainability.

Challenges in Balancing Automation and Humans

Over-automation risks unfair takedowns, while over-reliance on humans limits scalability. Additionally, poorly defined labels create inconsistency.

However, expert-managed labeling frameworks address these risks systematically.
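The inconsistency caused by poorly defined labels can be measured. One widely used statistic, not named in this article but common in annotation work, is Cohen's kappa, which compares how often two annotators agree with how often they would agree by chance. The sketch below is a plain-Python implementation under the assumption that both annotators labeled the same items in the same order; the sample labels are made up for illustration.

```python
from collections import Counter


def cohens_kappa(labels_a: list[str], labels_b: list[str]) -> float:
    """Cohen's kappa for two annotators labeling the same items in the same order."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("both annotators must label the same non-empty set of items")
    n = len(labels_a)
    # Observed agreement: share of items where both annotators chose the same label.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Chance agreement: computed from each annotator's own label frequencies.
    counts_a = Counter(labels_a)
    counts_b = Counter(labels_b)
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used a single identical label throughout
    return (observed - expected) / (1 - expected)


annotator_a = ["spam", "spam", "ok", "ok"]
annotator_b = ["spam", "ok", "ok", "ok"]
print(cohens_kappa(annotator_a, annotator_b))  # 0.5
```

A value near 1 indicates strong agreement, while a value near 0 means the annotators agree about as often as chance would predict, which often points to label definitions that need tightening.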

Why Expert-Managed Labeling Matters

Expert-managed content moderation labeling ensures policy alignment, consistency, and quality control across both automated and human workflows.

As a result, platforms maintain proactive safety without sacrificing fairness or efficiency.

How Annotera Supports Proactive Moderation

Annotera delivers content moderation labeling through governed workflows designed for hybrid moderation systems. Multi-layer QA ensures labels remain accurate, consistent, and policy-aligned.

Consequently, platforms gain moderation intelligence that supports proactive safety at scale.

Conclusion

Proactive platform safety is not a choice between automation and human review. It is a coordinated system built on reliable data.

Through content moderation labeling, platforms align automated filtering and human judgment to prevent harm while preserving trust.

Building proactive moderation systems that balance scale and judgment? Partner with Annotera for expert-managed content moderation labeling designed for hybrid, future-ready safety operations.