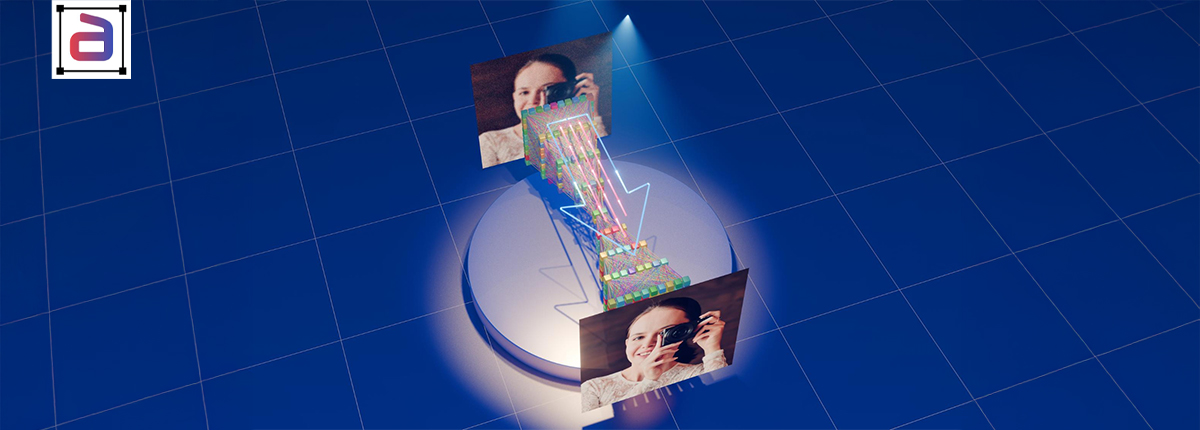

In autonomous systems, perception accuracy determines safety, reliability, and decision-making confidence. While object detection identifies what is present and roughly where, true environmental understanding requires pixel-level insight. As a result, semantic segmentation services have become a core resource for autonomous driving teams whose models must simultaneously interpret roads, vehicles, pedestrians, signage, and surroundings.

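Rather than relying on approximate boundaries such as bounding boxes, semantic segmentation assigns a class label to every pixel in an image. Consequently, models learn not only where objects are but also which regions are road, sidewalk, vegetation, or sky. This gives them a holistic, context-aware understanding of complex driving environments.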
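To make the difference concrete, here is a minimal Python sketch built on a hypothetical five-class taxonomy and a toy 4x6 image. In the label map, every pixel carries exactly one class ID. By contrast, the bounding box drawn around the same vehicle also sweeps in a road pixel. The names `CLASSES`, `pixel_counts`, and `box_purity` are illustrative only and do not belong to any annotation tool.

```python
from collections import Counter

# Hypothetical class IDs for illustration; real taxonomies are project-specific.
CLASSES = {0: "road", 1: "sidewalk", 2: "vehicle", 3: "pedestrian", 4: "vegetation"}

# Toy 4x6 label map: every pixel carries exactly one class ID.
label_map = [
    [4, 4, 1, 1, 4, 4],
    [1, 1, 0, 0, 1, 1],
    [0, 2, 2, 0, 0, 3],
    [0, 0, 2, 0, 0, 3],
]

# Bounding box around the same vehicle, as inclusive (row_min, col_min, row_max, col_max).
vehicle_box = (2, 1, 3, 2)


def pixel_counts(mask):
    """Count pixels per class name; the counts sum to the full image size."""
    counts = Counter(cid for row in mask for cid in row)
    return {CLASSES[cid]: n for cid, n in sorted(counts.items())}


def box_purity(mask, box, class_id):
    """Fraction of pixels inside an inclusive box that truly belong to class_id."""
    r0, c0, r1, c1 = box
    inside = [mask[r][c] for r in range(r0, r1 + 1) for c in range(c0, c1 + 1)]
    return sum(1 for cid in inside if cid == class_id) / len(inside)


print(pixel_counts(label_map))
print(box_purity(label_map, vehicle_box, class_id=2))
```

On this toy map, `pixel_counts(label_map)` returns `{'road': 9, 'sidewalk': 6, 'vehicle': 3, 'pedestrian': 2, 'vegetation': 4}`, which accounts for all 24 pixels. Only 75% of the vehicle's bounding box actually contains vehicle pixels.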

Why Autonomous Driving Demands Pixel-Level Understanding

Autonomous vehicles operate in highly dynamic environments where split-second decisions matter. Therefore, understanding precise spatial relationships between objects, lanes, sidewalks, and obstacles is essential.

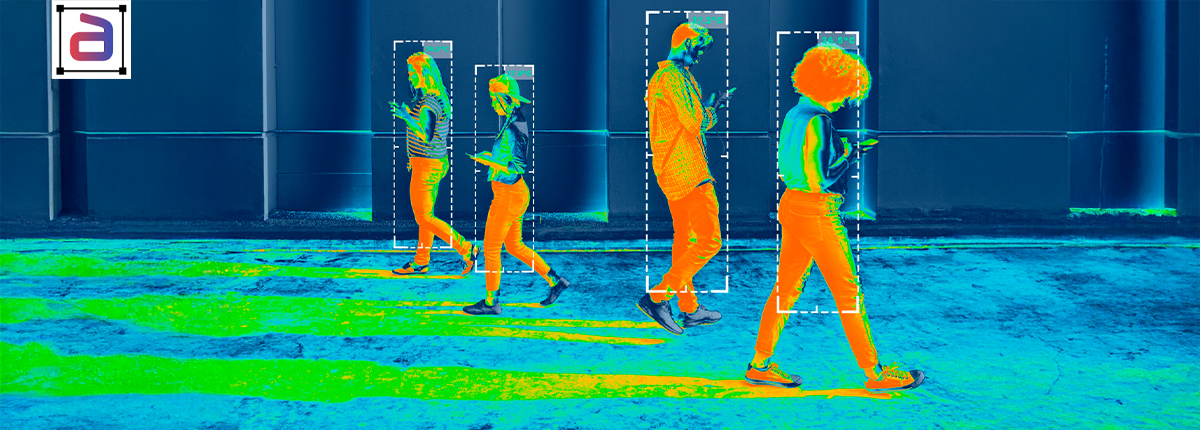

Semantic segmentation enables autonomous vehicles to differentiate drivable surfaces from non-drivable areas, detect lane boundaries in poor visibility, and interpret complex urban scenes. When the training data spans varied weather, lighting, and traffic conditions, the resulting perception systems become more robust across those conditions.
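Separating drivable from non-drivable areas often amounts to grouping segmentation classes. The sketch below assumes a hypothetical taxonomy in which road and lane-marking pixels count as drivable. Real programs define this split in their own labeling guidelines.

```python
# Hypothetical taxonomy; which classes count as drivable is an assumption here.
CLASSES = {0: "road", 1: "lane_marking", 2: "sidewalk", 3: "vehicle", 4: "pedestrian"}
DRIVABLE_CLASSES = {"road", "lane_marking"}
DRIVABLE_IDS = frozenset(cid for cid, name in CLASSES.items() if name in DRIVABLE_CLASSES)


def drivable_mask(label_map, drivable_ids=DRIVABLE_IDS):
    """Collapse a per-pixel class map into 1 (drivable) / 0 (non-drivable)."""
    return [[1 if cid in drivable_ids else 0 for cid in row] for row in label_map]


def drivable_fraction(label_map, drivable_ids=DRIVABLE_IDS):
    """Share of all pixels that fall on drivable classes."""
    mask = drivable_mask(label_map, drivable_ids)
    total = sum(len(row) for row in mask)
    return sum(sum(row) for row in mask) / total


label_map = [
    [2, 2, 0, 1, 0, 2],
    [2, 0, 0, 1, 3, 2],
    [4, 0, 0, 1, 3, 2],
]

for row in drivable_mask(label_map):
    print(row)
print(drivable_fraction(label_map))
```

In this example, 9 of the 18 pixels are drivable, so `drivable_fraction(label_map)` returns `0.5`. Because the drivable set is a parameter, the same label maps can serve different planning definitions.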

What Semantic Segmentation Services Deliver

Semantic segmentation services involve labeling every pixel in an image according to a predefined class taxonomy. Bounding boxes and polygons outline individual objects and leave the rest of the frame unlabeled. This approach, by contrast, covers the entire scene and minimizes ambiguity about what each region contains.

Because of this completeness, autonomous driving models can reason about context, continuity, and spatial hierarchy rather than just isolated objects.
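Complete scene coverage can also be checked mechanically. The minimal sketch below uses a hypothetical taxonomy and assumes that the value 255 marks an unlabeled pixel. It reports what fraction of pixels carry a valid class and lists any pixels that do not.

```python
# Hypothetical taxonomy and sentinel; real projects define their own IDs.
TAXONOMY = {0: "road", 1: "sidewalk", 2: "vehicle", 3: "pedestrian", 4: "vegetation"}
UNLABELED = 255  # assumed marker for pixels an annotator has not labeled


def coverage_report(label_map, taxonomy=TAXONOMY):
    """Return (share of pixels with a valid class, list of offending (row, col, value))."""
    problems = []
    total = 0
    for r, row in enumerate(label_map):
        for c, cid in enumerate(row):
            total += 1
            if cid not in taxonomy:
                problems.append((r, c, cid))
    return (total - len(problems)) / total, problems


label_map = [
    [4, 4, 1, 1],
    [0, 0, UNLABELED, 1],
    [0, 2, 2, 7],  # 7 is not in the taxonomy
    [0, 0, 0, 0],
]

ratio, problems = coverage_report(label_map)
print(ratio)
print(problems)
```

In this example, two pixels fail the check: one was left unlabeled and one carries an ID (7) outside the taxonomy. The coverage ratio is therefore `0.875`.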

Key Autonomous Driving Use Cases

Road and Lane Detection

Pixel-level labeling allows precise identification of lanes, curbs, and road edges, even when markings are faded or partially occluded.

Obstacle and Surface Classification

Semantic segmentation distinguishes vehicles, pedestrians, cyclists, vegetation, and static infrastructure. Path planning therefore gets a clearer picture of which parts of the scene are occupied, and by what.

Urban Scene Understanding

In dense city environments, segmentation helps models interpret intersections, crosswalks, traffic islands, and signage in context.

Challenges in Autonomous Segmentation Projects

Despite its value, semantic segmentation is resource-intensive. High annotation effort, large image volumes, and complex class taxonomies introduce operational challenges.

However, when managed correctly, these challenges are outweighed by gains in perception accuracy and system safety.

Why Managed Semantic Segmentation Matters

Autonomous driving teams often require large, consistently labeled datasets collected across regions and conditions. Managed semantic segmentation services provide trained annotators, standardized guidelines, and scalable delivery models. Together, these keep pixel-level annotations consistent and high-quality at scale, which reduces label noise and bias and improves model accuracy. A managed service also streamlines workflows, enforces QA standards, and accelerates deployment.

As a result, engineering teams can iterate faster while maintaining confidence in data quality.

How Annotera Supports Autonomous Segmentation Programs

Annotera delivers semantic segmentation services through governed workflows, domain-trained annotation teams, and multi-layer quality assurance. Each dataset is validated for consistency, completeness, and class accuracy.

Consequently, autonomous driving teams receive production-ready data that supports real-world deployment.
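Annotera's specific QA steps are not detailed here, so the following is only a hypothetical illustration of one common way to measure labeling consistency. It computes per-class intersection over union (IoU) between two annotators' masks of the same image. The class IDs and the 0.8 review threshold are arbitrary assumptions.

```python
# Hypothetical QA sketch: compare two annotators' label maps of the same image.
# Class IDs are illustrative: 0 = road, 1 = sidewalk, 2 = vehicle.


def per_class_iou(mask_a, mask_b):
    """Return {class_id: IoU} for every class present in either mask."""
    if len(mask_a) != len(mask_b) or any(
        len(row_a) != len(row_b) for row_a, row_b in zip(mask_a, mask_b)
    ):
        raise ValueError("masks must have identical dimensions")
    classes = {cid for row in mask_a + mask_b for cid in row}
    ious = {}
    for cid in sorted(classes):
        intersection = union = 0
        for row_a, row_b in zip(mask_a, mask_b):
            for a, b in zip(row_a, row_b):
                if a == cid and b == cid:
                    intersection += 1
                if a == cid or b == cid:
                    union += 1
        # union > 0 because cid appears in at least one mask
        ious[cid] = intersection / union
    return ious


def flag_disagreements(ious, threshold=0.8):
    """List class IDs whose IoU falls below the (assumed) review threshold."""
    return [cid for cid, iou in sorted(ious.items()) if iou < threshold]


annotator_a = [
    [0, 0, 1, 1],
    [0, 2, 2, 1],
    [0, 2, 2, 1],
]
annotator_b = [
    [0, 0, 1, 1],
    [0, 0, 2, 1],
    [0, 2, 2, 1],
]

ious = per_class_iou(annotator_a, annotator_b)
print(ious)
print(flag_disagreements(ious))
```

Here the two annotators agree perfectly on sidewalk (class 1). The vehicle (class 2) scores only 0.75, so `flag_disagreements` returns `[2]` and that class would go back for review.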

Conclusion

Pixel-level clarity is fundamental to the safe and reliable operation of autonomous systems. By leveraging semantic segmentation services, teams can train models that understand not just objects, but entire driving environments.

In autonomous driving, semantic segmentation is not optional. It is foundational.

Building perception systems for autonomous vehicles or ADAS platforms? Partner with Annotera for expert-managed semantic segmentation services designed for safety-critical environments.