Digital platforms operate under constant pressure to keep users safe while supporting open expression. As user-generated content grows in volume and complexity, manual review alone cannot keep pace. In this environment, content moderation services provide the structured labeling foundation that enables platforms to enforce policies consistently, respond to risk quickly, and scale trust and safety operations.

For trust and safety leads, content moderation labeling is not just a compliance requirement. It is a core capability for sustainable platform growth.

Why Platform Safety Becomes Harder at Scale

User-generated content spans text, images, video, and audio across languages and cultures. Consequently, harmful material can appear in subtle forms, including coded language or contextual abuse.

As platforms grow, inconsistent enforcement increases risk exposure and erodes user trust. Therefore, scalable moderation requires both automation and high-quality labeled data.

What Content Moderation Services Deliver

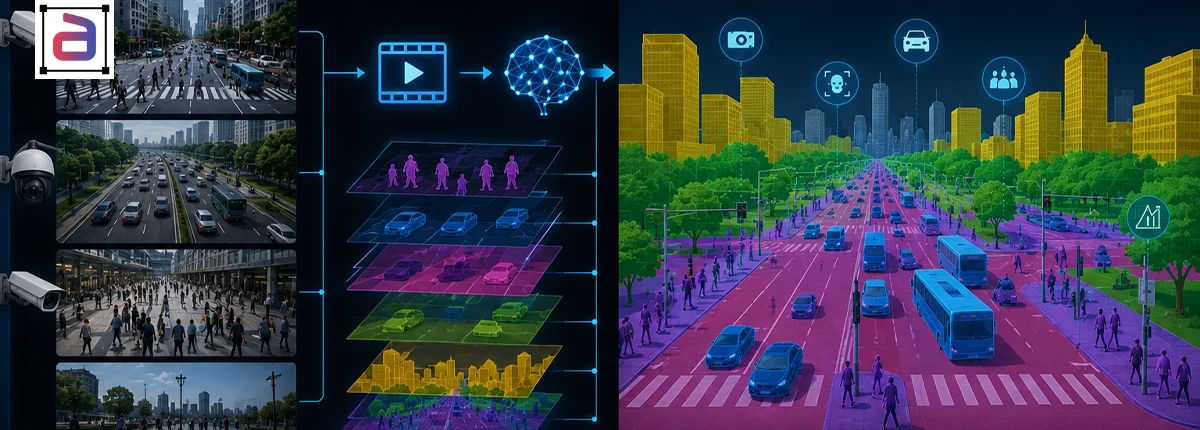

Content moderation services classify content into policy-defined categories, including hate speech, harassment, violence, adult content, and misinformation. AI systems trained on these labels learn to detect and route risky content more accurately. The result is reliable detection and filtering of harmful, abusive, misleading, and policy-violating content across text, images, audio, and video. Effective content moderation improves user safety, strengthens brand trust, and supports compliance.

Modern moderation labeling often includes:

- Policy-aligned category tagging

- Severity and confidence scoring

- Context-aware escalation flags

These signals support fast, consistent moderation decisions.
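As an illustration, the three signals listed above could be carried in a single label record. The following is a minimal sketch, not a description of any particular vendor's schema: the `ModerationLabel` class, its field names, and the 1 to 4 severity scale are assumptions made for the example, and the categories are the ones named earlier in this article.

```python
from dataclasses import dataclass
from enum import Enum


class Category(Enum):
    """Policy-defined categories named in this article."""
    HATE_SPEECH = "hate_speech"
    HARASSMENT = "harassment"
    VIOLENCE = "violence"
    ADULT_CONTENT = "adult_content"
    MISINFORMATION = "misinformation"


@dataclass(frozen=True)
class ModerationLabel:
    """Hypothetical label record; field names are illustrative only."""
    content_id: str
    category: Category       # policy-aligned category tag
    severity: int            # assumed scale: 1 (low) to 4 (critical)
    confidence: float        # labeler or model confidence in [0.0, 1.0]
    escalate: bool = False   # context-aware escalation flag

    def __post_init__(self):
        # Reject out-of-range scores so downstream systems can trust them.
        if not 1 <= self.severity <= 4:
            raise ValueError("severity must be between 1 and 4")
        if not 0.0 <= self.confidence <= 1.0:
            raise ValueError("confidence must be between 0.0 and 1.0")


label = ModerationLabel(
    content_id="post-123",
    category=Category.HARASSMENT,
    severity=3,
    confidence=0.92,
    escalate=True,
)
```

Validating ranges at creation time is one way to keep labels consistent across reviewers; a real program would define its own scales and categories in its policy documentation.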

Enabling Scalable Trust and Safety Operations

Faster Triage and Response

Labeled data allows automated systems to prioritize high-risk content for immediate review.
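One way to picture this prioritization is a priority queue ordered by a risk score. The sketch below is illustrative only: the `priority` formula (severity multiplied by confidence, plus a fixed boost for escalated items) and the `triage` helper are assumptions for the example, not a recommended or standard scoring method.

```python
import heapq


def priority(severity, confidence, escalate):
    """Hypothetical risk score: higher means review sooner."""
    score = severity * confidence
    if escalate:
        score += 10  # assumed boost so escalated items jump the queue
    return score


def triage(items):
    """Return content ids ordered from highest to lowest priority.

    Each item is a tuple: (content_id, severity, confidence, escalate).
    """
    heap = []
    for order, (content_id, severity, confidence, escalate) in enumerate(items):
        # heapq is a min-heap, so negate the score to pop the riskiest first.
        # `order` breaks ties in arrival order.
        score = priority(severity, confidence, escalate)
        heapq.heappush(heap, (-score, order, content_id))
    return [heapq.heappop(heap)[2] for _ in range(len(heap))]


queue = triage([
    ("post-1", 1, 0.9, False),
    ("post-2", 4, 0.8, False),
    ("post-3", 2, 0.6, True),
])
print(queue)  # ['post-3', 'post-2', 'post-1']
```

Here the escalated item is reviewed first even though its severity is moderate, which reflects the idea that contextual flags can outrank raw scores. How those signals are weighted is a policy decision for each platform.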

Consistent Policy Enforcement

Standardized labels ensure uniform application of community guidelines.

Reduced Reviewer Burnout

Automation-supported moderation lowers human reviewers' exposure to harmful content.

Challenges in High-Volume Content Moderation

Policy interpretation can vary, edge cases are frequent, and content evolves rapidly. Additionally, adversarial users attempt to bypass detection.

However, with expert-managed labeling and continuous policy calibration, platforms can maintain accuracy at scale.

Why Expert-Managed Moderation Labeling Matters

Expert-managed content moderation services provide trained reviewers, policy alignment, and multi-layer quality assurance.

As a result, trust and safety teams gain reliable training data that supports both automation and human review.

How Annotera Supports Platform Safety Programs

Annotera delivers content moderation services through governed workflows designed for high-volume, policy-sensitive environments. Multi-layer QA ensures labeling consistency, accuracy, and audit readiness.

Consequently, platform teams receive content moderation data that scales with growth and regulatory expectations.

Conclusion

Scaling platform safety requires more than reactive review. It requires structured moderation intelligence built into content pipelines.

Through content moderation services, platforms achieve faster response, consistent enforcement, and safer digital environments.

Managing trust and safety at scale? Partner with Annotera for expert-managed content moderation services designed to support high-accuracy labeling and scalable platform safety.