As platforms scale, content moderation increasingly relies on automation to handle volume and speed. However, fully automated systems struggle with nuance, context, and evolving policy interpretations. In this environment, human-led content moderation provides a critical advantage by combining machine efficiency with human judgment.

For operations VPs, human-in-the-loop moderation is not a fallback strategy. It is a deliberate design choice that balances scale, accuracy, and accountability.

Why Automation Alone Falls Short

Automated content moderation systems perform well on clear violations but falter on edge cases involving sarcasm, cultural references, or ambiguous intent.

Overreliance on automation therefore leads to false positives, user frustration, and policy-enforcement risk, which is why human judgment remains essential.

What Human-Led Content Moderation Delivers

Human-led content moderation integrates trained reviewers into workflows to validate and refine automated decisions. As a result, platforms achieve higher accuracy without sacrificing throughput.

Key capabilities include:

- Contextual review of borderline content

- Policy interpretation and escalation

- Feedback loops that improve model performance

These capabilities create resilient moderation systems.
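One common way to wire these capabilities together is confidence-based routing: the model handles clear cases automatically, borderline content goes to a reviewer, and the reviewer's decision is kept as a labeled example for retraining. The sketch below is illustrative only. The thresholds, the `ModelResult` type, and the function names are assumptions made for this example, not a description of any specific platform's system.

```python
from dataclasses import dataclass

# Hypothetical thresholds; real values would be tuned per policy and platform.
AUTO_REMOVE_THRESHOLD = 0.95
AUTO_ALLOW_THRESHOLD = 0.05


@dataclass
class ModelResult:
    content_id: str
    violation_score: float  # assumed model output in the range [0, 1]


def route(result: ModelResult) -> str:
    """Return 'remove', 'allow', or 'human_review' for one item."""
    if result.violation_score >= AUTO_REMOVE_THRESHOLD:
        return "remove"
    if result.violation_score <= AUTO_ALLOW_THRESHOLD:
        return "allow"
    # Borderline scores go to a trained reviewer for contextual judgment.
    return "human_review"


def record_feedback(result: ModelResult, reviewer_decision: str, log: list) -> None:
    """Store the reviewer's decision as a labeled example for later retraining."""
    log.append(
        {
            "content_id": result.content_id,
            "model_score": result.violation_score,
            "label": reviewer_decision,
        }
    )
```

Widening the gap between the two thresholds sends more content to reviewers, trading throughput for accuracy; narrowing it does the opposite. Where to set that gap is a policy decision as much as a technical one.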

How Human-in-the-Loop Improves Outcomes

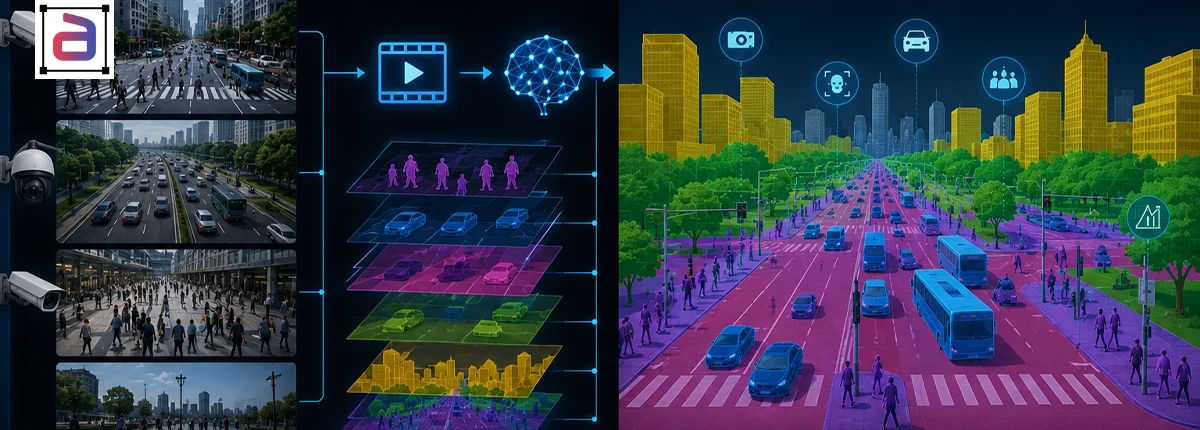

Human-in-the-loop workflows significantly enhance annotation accuracy by combining machine efficiency with human judgment. Experts review, correct, and validate automated outputs, reducing errors in edge cases and complex scenarios. This collaborative approach improves data quality, strengthens model performance, and ensures more reliable outcomes for computer vision and AI training systems.

Higher Precision and Fairness

Human reviewers resolve ambiguity that models cannot reliably interpret.

Continuous Model Improvement

Reviewer feedback informs retraining and policy updates.

Regulatory and Audit Readiness

Documented human oversight supports compliance and transparency.

Operational Considerations for Human-Led Moderation

Scaling human review requires structured workflows, reviewer training, and mental health safeguards. Additionally, consistency depends on clear guidelines and quality controls.

However, when designed properly, human-led moderation scales predictably.
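One way to make the quality controls mentioned above measurable is to have two reviewers label the same sample of content and track how often they agree beyond what chance would predict. The sketch below computes Cohen's kappa for that purpose. It is a minimal illustration: the function name and the example labels are assumptions, and a real program would choose its own sampling and targets.

```python
from collections import Counter


def cohens_kappa(labels_a: list, labels_b: list) -> float:
    """Agreement between two reviewers on the same items, corrected for chance."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("Both reviewers must label the same non-empty set of items.")

    n = len(labels_a)
    # Share of items where both reviewers made the same decision.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n

    # Agreement expected by chance, from each reviewer's label frequencies.
    counts_a = Counter(labels_a)
    counts_b = Counter(labels_b)
    expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)

    if expected == 1.0:
        # Both reviewers used a single identical label for every item.
        return 1.0
    return (observed - expected) / (1 - expected)


# Hypothetical decisions from two reviewers on the same four items.
reviewer_1 = ["remove", "allow", "allow", "remove"]
reviewer_2 = ["remove", "allow", "remove", "remove"]
kappa = cohens_kappa(reviewer_1, reviewer_2)
```

A value near 1 indicates consistent decisions; a falling value is a signal that guidelines need clarification or that reviewers need recalibration.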

Why Expert-Managed Review Teams Matter

Expert-managed content moderation programs provide trained reviewers, calibrated policies, and multi-layer quality assurance.

As a result, operations leaders maintain control over moderation quality while meeting volume demands.

How Annotera Supports Human-in-the-Loop Moderation

Annotera delivers human-led content moderation through governed workflows that integrate automation with expert review. Multi-layer QA ensures consistent decisions and continuous improvement.

Consequently, platforms achieve safer environments without compromising efficiency.

Conclusion

Trust and safety depend on judgment as much as technology. Automation accelerates moderation, but humans ensure it remains fair and defensible.

Through human-led content moderation, platforms build systems that adapt to nuance, policy change, and real-world complexity.

Designing scalable moderation workflows that require human judgment? Partner with Annotera for expert-managed human-led content moderation built for accuracy, resilience, and operational scale.