IoT devices are getting better at sensing the world — but most still struggle to understand it. A microphone can capture sound. The hard part is turning messy, real-world audio into reliable signals. That’s what audio classification services enable: helping IoT products recognize soundscapes and events to respond intelligently. Connected IoT devices are projected to reach 21.1 billion by the end of 2025. As IoT scales, audio becomes one of the most valuable sensors — adding context that temperature, motion, or GPS can’t provide.

“Sound is the most underused sensor in IoT. It’s always available, and it carries context—if you can classify it.”


What Are Audio Classification Services?

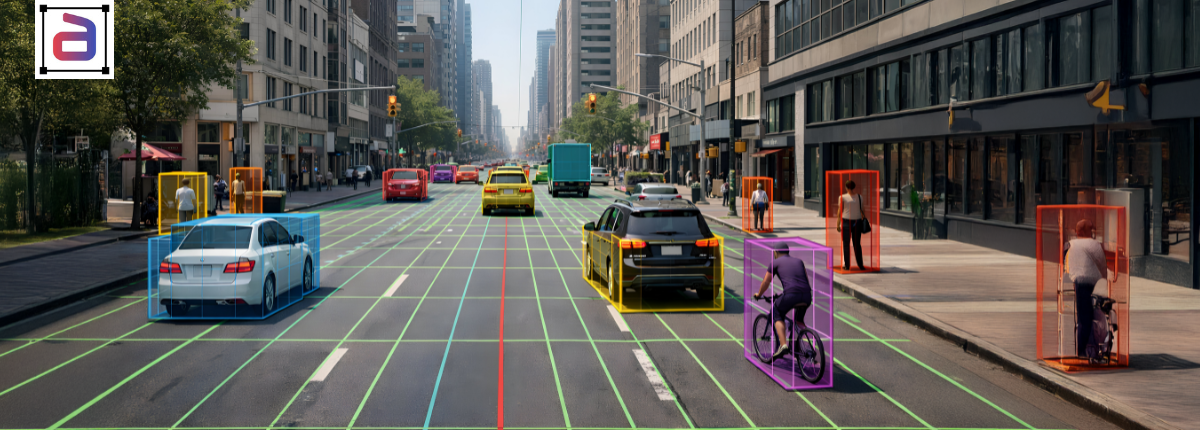

Audio classification assigns a label to an audio clip based on what it contains. In IoT, this means classifying acoustic scenes (“street,” “kitchen,” “factory floor”) and acoustic events (“siren,” “alarm,” “machinery fault”). Audio classification services build the labeled training data your models need — consistently, at scale, and aligned to your device environment.

Audio Classification vs Similar Tasks

Speech recognition answers “what was said?” Speaker ID answers “who spoke?” Audio classification answers “what sound or scene is this?” Sound event detection answers “when does a sound happen?” If your IoT product needs environmental awareness rather than speech understanding, classification is the core building block.

Here’s the quick difference, because these terms get mixed up:

| Task | What it answers | Example output |
|---|---|---|
| Speech recognition (ASR) | What was said? | “Turn on the lights” |
| Speaker ID | Who spoke? | “Speaker: User A” |
| Audio classification | What sound (or scene) is this? | “Scene: street” |
| Sound event detection | When does a sound happen? | “Siren: 03.2s–06.1s” |


Why IoT Teams Are Investing in Audio Now

Three trends are colliding. IoT is exploding in scale. Edge AI is becoming practical for always-on inference. Sound recognition is becoming a serious commercial category. For developers, that means: if your device can classify its environment, it makes better decisions with fewer sensors. Learn more in our audio classification guide.

What Soundscapes Mean in IoT

A soundscape is the audio fingerprint of an environment. A kitchen isn’t just “noise” — it’s a mix of appliance hums, human movement, water running, utensils clinking, and occasional speech. The goal of soundscape classification is to teach models to recognize these composite audio signatures and respond accordingly.

Common IoT Soundscape Categories

Most IoT products start with 8–20 scenes and expand over time:

| Scene | Typical environments | Product value |
|---|---|---|
| Home (quiet) | bedrooms, living rooms | occupancy + safety |
| Kitchen | homes, cafeterias | appliance monitoring |
| Street | outdoor urban | mode switching |
| Vehicle | cars, buses | hands-free UX + safety |
| Industrial | factories, warehouses | monitoring + alerts |
| Retail | stores, malls | security + experience |

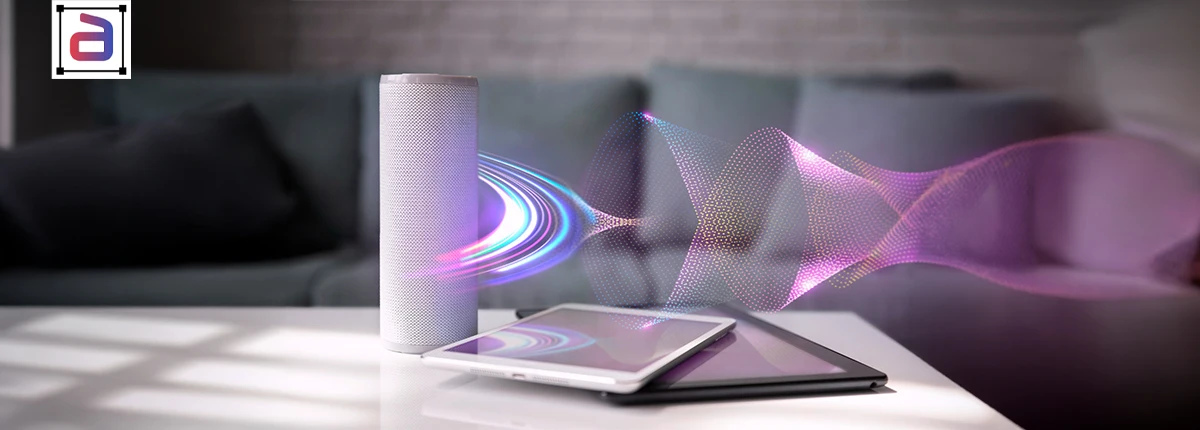

Typical Sounds Classified by IoT Teams

IoT audio classification usually combines scene labels and event labels. Teams label machinery noise, footsteps, speech, alarms, and environmental sounds to power monitoring systems. Detecting anomalies such as glass breaks or equipment faults supports security and predictive maintenance, while contextual sound analysis improves safety, automation, and real-time decision-making across connected environments.

Event Categories That Matter Most

Here’s a practical set of categories IoT teams frequently adopt:

| Category | Examples | Where it’s used |
|---|---|---|
| Mechanical | fan, motor, compressor | predictive maintenance |
| Environmental | wind, rain, traffic | outdoor device adaptation |
| Human activity | footsteps, voices, cough | occupancy + wellbeing |
| Safety signals | alarms, sirens, smoke alarm | home + industrial safety |
| Impact events | glass break, crash, bang | security + incident response |

“In IoT, accuracy isn’t the only goal. Consistency is. A model that’s ‘sometimes right’ is a product risk.”

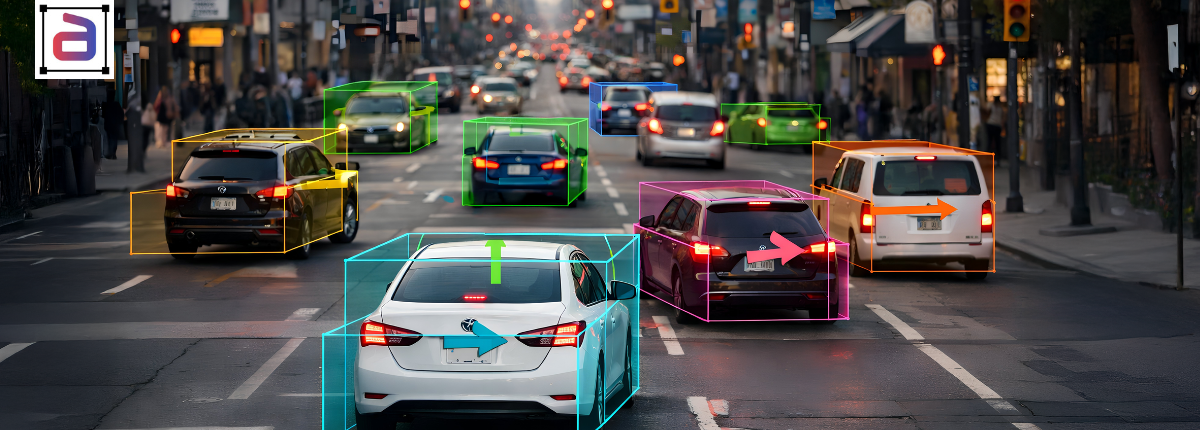

How Audio Classification Makes IoT Devices Smarter

Audio classification isn’t “nice to have.” It directly improves reliability and usability.

1) Context-aware Automation

Instead of hard-coded rules (“if motion then X”), devices can adapt based on a recognized scene:

- If street, ignore indoor acoustic triggers

- If vehicle, adjust wake sensitivity and filtering

- If industrial, prioritize alarms and hazard signals
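As a rough illustration of the scene rules above, here is a minimal Python sketch that maps a recognized scene to a device profile. The `DeviceProfile` fields, the numeric sensitivity values, and the `profile_for_scene` helper are all hypothetical names chosen for this example, not part of any specific product or SDK.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DeviceProfile:
    """Hypothetical per-scene settings; field names are illustrative only."""
    wake_sensitivity: float        # 0.0 (least sensitive) to 1.0 (most sensitive)
    indoor_triggers_enabled: bool  # whether indoor acoustic triggers are active
    priority_events: tuple         # event labels handled before anything else


# Assumed values: tune these per product, they are placeholders.
DEFAULT_PROFILE = DeviceProfile(0.5, True, ())
PROFILES = {
    "street": DeviceProfile(0.5, False, ()),                     # ignore indoor triggers
    "vehicle": DeviceProfile(0.3, True, ()),                     # lower wake sensitivity
    "industrial": DeviceProfile(0.5, True, ("alarm", "hazard")), # alarms first
}


def profile_for_scene(scene: str) -> DeviceProfile:
    """Return the profile for a recognized scene, or the default if unknown."""
    return PROFILES.get(scene, DEFAULT_PROFILE)
```

Keeping the mapping in data rather than in nested `if` statements makes it easier to add scenes later without touching the decision logic.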

Industrial noise labeling involves tagging machine sounds, alarms, and ambient acoustic events so AI systems can interpret complex industrial soundscapes. This structured audio annotation improves anomaly detection, safety monitoring, and predictive maintenance performance in high-noise operational environments.

2) Fewer False Triggers

Many IoT systems fail because they react to irrelevant audio:

- a vacuum triggers “intrusion”

- a TV triggers “conversation”

- wind triggers “movement”

Well-trained classifiers reduce false positives—especially when your labeling captures real-world variation.

3) Better Edge Performance

Audio classification lets you run lightweight models on-device and send only high-value events to the cloud.

That helps with:

- bandwidth costs

- latency requirements

- privacy constraints
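One way to picture "send only high-value events to the cloud" is a small on-device gate like the sketch below. The label set, the confidence threshold, and the shape of each prediction (a dict with `label` and `confidence` keys) are assumptions made for illustration; a real device would take these from its own model and product requirements.

```python
# Assumed set of labels worth uploading; adjust to your own taxonomy.
HIGH_VALUE_LABELS = {"alarm", "siren", "glass_break"}
# Assumed minimum model confidence before an event leaves the device.
MIN_CONFIDENCE = 0.8


def should_upload(label: str, confidence: float) -> bool:
    """True only for high-value labels the model is confident about."""
    return label in HIGH_VALUE_LABELS and confidence >= MIN_CONFIDENCE


def select_for_cloud(predictions):
    """Keep the predictions that pass the gate; everything else stays on-device."""
    return [p for p in predictions if should_upload(p["label"], p["confidence"])]
```

For example, given predictions for `vacuum`, a low-confidence `siren`, and a high-confidence `glass_break`, only the last one would be forwarded.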

The Biggest Challenge: Real-world Audio Is Messy

IoT audio is rarely clean.

You’ll deal with:

- overlapping sounds (speech + traffic + music)

- device-specific artifacts (microphone hiss, gain jumps)

- different rooms, buildings, and materials

- regional differences in soundscapes

- rare events that matter most (alarms, hazards)

This is why “generic datasets” often underperform in IoT. The model needs to learn your target environment. That’s where professional audio classification services help most: converting messy, domain-specific audio into reliable training signals.

How A Production-ready Audio Classification Workflow Works

Here is a service workflow that IoT teams can actually deploy:

Step 1: Define A Sound Taxonomy That Matches Your Product

You don’t want 300 labels on day one. You want labels that map to actions.

A good taxonomy answers:

- What decisions will the device make from this label?

- Which sounds cause costly errors today?

- Which scenes are most common in your user environments?
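A taxonomy where every label maps to an action can be sketched as a simple lookup plus a check for labels that have no action yet. The label names, action names, and the `labels_without_action` helper below are hypothetical and only meant to show the idea.

```python
# Hypothetical taxonomy: each label maps to the device action it drives.
TAXONOMY = {
    "smoke_alarm": "notify_user",
    "glass_break": "raise_security_alert",
    "vacuum": "suppress_intrusion_alert",
    "street": "disable_indoor_triggers",
}


def labels_without_action(labels, taxonomy):
    """Return candidate labels that do not map to any action, sorted by name.

    Labels in this list are candidates to drop or defer, since nothing in
    the product would change when the model predicts them.
    """
    return sorted(label for label in labels if not taxonomy.get(label))
```

Running the check against a proposed label list before annotation starts is a cheap way to keep the first taxonomy small.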

Step 2: Choose Label Granularity

For IoT, the common options are:

| Granularity | What it means | Best for |
|---|---|---|
| Clip-level | one label per clip | scene classification |
| Segment-level | labels over time spans | events + behaviors |
| Frame-level | highly precise timing | advanced detection |
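The three granularities are related: segment-level labels can be expanded into frame-level labels or collapsed into clip-level tags. The sketch below shows one possible conversion. The `Segment` type, the function names, and the fixed frame length `hop_s` are assumptions for this example, and the clip-level output here is a set of tags rather than the single scene label a scene classifier would use.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    """One labeled time span within a clip (hypothetical structure)."""
    label: str
    start_s: float
    end_s: float


def to_frame_labels(segments, clip_duration_s, hop_s=0.5):
    """Expand segments into one set of labels per fixed-length frame.

    A frame receives a label if the segment overlaps it at all.
    """
    n_frames = int(round(clip_duration_s / hop_s))
    frames = [set() for _ in range(n_frames)]
    for seg in segments:
        for i in range(n_frames):
            frame_start = i * hop_s
            frame_end = frame_start + hop_s
            if seg.start_s < frame_end and seg.end_s > frame_start:
                frames[i].add(seg.label)
    return frames


def to_clip_labels(segments):
    """Collapse segments into the sorted list of labels present in the clip."""
    return sorted({seg.label for seg in segments})
```

Labeling at segment level and deriving the other two views is often a practical middle ground, since it avoids paying for frame-level precision that the product may not need.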

Step 3: Label and QA With Consistency Rules

Audio labeling fails when rules are vague.

High-quality programs define:

- overlap rules (multi-label allowed?)

- minimum event duration

- priority labels (alarm beats music)

- edge cases (TV speech vs real speech)
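Rules like these are easier to enforce when they are written down as code that annotation QA can run. Here is a minimal sketch of two of them, minimum event duration and label priority. The threshold value, the priority order beyond "alarm beats music", and the dict-based segment format are assumptions for illustration.

```python
# Assumed minimum duration; shorter events are treated as noise and dropped.
MIN_EVENT_DURATION_S = 0.25
# Assumed priority order, highest first ("alarm beats music").
PRIORITY = ["alarm", "speech", "music"]


def drop_short_events(segments, min_duration_s=MIN_EVENT_DURATION_S):
    """Keep only segments at least min_duration_s long."""
    return [s for s in segments if s["end_s"] - s["start_s"] >= min_duration_s]


def primary_label(labels, priority=PRIORITY):
    """Pick the single label to report when several overlap.

    Labels listed in `priority` win in that order; labels outside the list
    fall back to alphabetical order so the result is deterministic.
    """
    ranked = [label for label in priority if label in labels]
    if ranked:
        return ranked[0]
    return sorted(labels)[0] if labels else None
```

If multi-label output is allowed, `primary_label` is only needed for downstream consumers that expect one label per span.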

Step 4: Deliver Model-ready Outputs

Typical output formats include:

- timestamped CSV/JSON

- per-frame label arrays

- metadata bundles (device type, SNR band, environment tag)
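To make the formats concrete, the sketch below builds one timestamped JSON record that bundles segment labels with metadata. The field names (`clip_id`, `snr_band`, and so on), the example values, and the `build_record` helper are hypothetical; an actual delivery schema would be agreed per project.

```python
import json


def build_record(clip_id, segments, device_type, snr_band, environment):
    """Assemble one annotation record with metadata and time-sorted segments."""
    return {
        "clip_id": clip_id,
        "metadata": {
            "device_type": device_type,
            "snr_band": snr_band,
            "environment": environment,
        },
        "segments": sorted(segments, key=lambda s: s["start_s"]),
    }


# Example values are made up for illustration.
record = build_record(
    clip_id="clip_0001",
    segments=[
        {"label": "siren", "start_s": 3.2, "end_s": 6.1},
        {"label": "traffic", "start_s": 0.0, "end_s": 8.0},
    ],
    device_type="doorbell_v2",
    snr_band="10-20dB",
    environment="street",
)
print(json.dumps(record, indent=2))
```

Carrying device type and SNR band alongside the labels makes it possible to slice model performance by recording condition later.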

Why IoT Teams Outsource Audio Classification

If you’re building a product, in-house labeling quickly becomes a bottleneck. IoT teams outsource because:

- Volume is large (always-on audio produces massive data)

- Consistency is hard without trained annotators and QA

- Iteration is constant (labels evolve as the product evolves)

- Engineering time is expensive—and better spent on models, deployment, and UX

“Annotation is a pipeline. If it’s not scalable and repeatable, it won’t survive production.”

What To Look For In Audio Classification Services

If you’re evaluating a global audio annotation partner, focus on operational outcomes, not promises.

A Quick Evaluation Checklist

| Capability | Why it matters |
|---|---|
| Custom taxonomies | IoT environments are domain-specific |
| Overlap-aware labeling | Real-world audio often contains overlapping sounds |
| QA with agreement checks | Prevents label drift |
| Tool flexibility | Fits your pipeline |
| Security controls | Audio can contain sensitive content |
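An agreement check can be as simple as comparing two annotators' labels on the same clips. The sketch below computes Cohen's kappa, a common chance-corrected agreement statistic. It is offered as one possible check, not as the method any particular vendor uses, and the function name and input format (two equal-length label lists) are assumptions.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators labeling the same items.

    1.0 means perfect agreement; 0.0 means agreement no better than chance.
    """
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("label lists must be non-empty and the same length")
    n = len(labels_a)
    # Fraction of items where both annotators chose the same label.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Agreement expected by chance, from each annotator's label frequencies.
    counts_a = Counter(labels_a)
    counts_b = Counter(labels_b)
    expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used a single identical label throughout
    return (observed - expected) / (1 - expected)
```

Tracking this number per label batch over time is one way to spot label drift before it reaches the training set.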

How Annotera Supports IoT Audio Classification

Annotera provides specialized audio annotation to train classification models for real-world IoT environments. Our teams label acoustic scenes and events with precise temporal boundaries, handle overlapping sounds and edge cases, and deliver datasets aligned to your specific device and deployment context. Moreover, regional audio annotation captures dialectal, accent, and pronunciation variability that standard speech datasets overlook. Incorporating these localized speech patterns into training data strengthens ASR robustness, minimizes dialect bias, and enables voice systems to perform reliably across diverse linguistic and geographic populations.

What that means in practice:

- Custom sound taxonomies aligned to device actions

- Scene + event labeling (clip/segment/frame options)

- Overlap-aware multi-label audio tagging where needed

- Human QA with consistency checks

- Dataset-agnostic delivery: we label your audio; we don’t sell datasets

Business Impact: Why This Matters Now

Audio intelligence is becoming a competitive advantage—especially as IoT grows.

- IoT connections are projected to grow rapidly, reaching 21.1B devices by the end of 2025.

- Cellular IoT alone is forecast to reach 4.5B connections by the end of 2025, underscoring the number of devices operating in diverse, noisy environments.

- The sound recognition market is projected to grow significantly through 2030, reflecting the accelerating adoption of audio-aware systems.

For IoT product teams, the takeaway is simple:

If your device can reliably identify soundscapes, it can deliver smarter experiences with fewer sensors, fewer false alarms, and better real-world performance.

Conclusion: Turn Sound Into A Signal

Audio classification services turn raw sound into structured intelligence for IoT devices. By accurately labeling soundscapes and events, teams build models that understand their environment and respond in real time.

Need labeled audio data for your IoT classification models? Contact Annotera to get started.